Quality Standards for SEO & Digital Marketing: 5 Levers Pros Pull First

Quality standards are the gates that separate tactical activity from durable results. This article covers five operational quality levers (content intent match, technical health, link neighborhood quality, UX for conversion, and measurement integrity) that you can use to vet work, spot red flags, and make outcomes more predictable.

By the end you will be able to decide whether a vendor or internal team is following professional quality standards, and to run a 10-minute audit that exposes the most common shortcuts. Read this if you want to stop guessing and start asking the right questions.

Which Quality Standards Professionals Use

Professionals treat quality as a multidimensional gate, not a checklist you tick at the end. The five levers we pull first are content intent match, technical health, link neighborhood quality, user experience for conversion, and measurement integrity. Each lever has an operational threshold and a short list of tools used to verify it.

Why this matters: if one lever fails, the others have to carry more of the load and budget gets wasted. On small-to-medium business engagements we usually see technical fixes done first, after which content and links slip because the team assumes traffic will follow. It rarely does until intent and UX are aligned.

- Content Intent Match — verify the page satisfies the dominant SERP intent for the target query using semantic similarity tooling like OpenAI embeddings or spaCy; we look for a cosine similarity threshold we set as a gate.

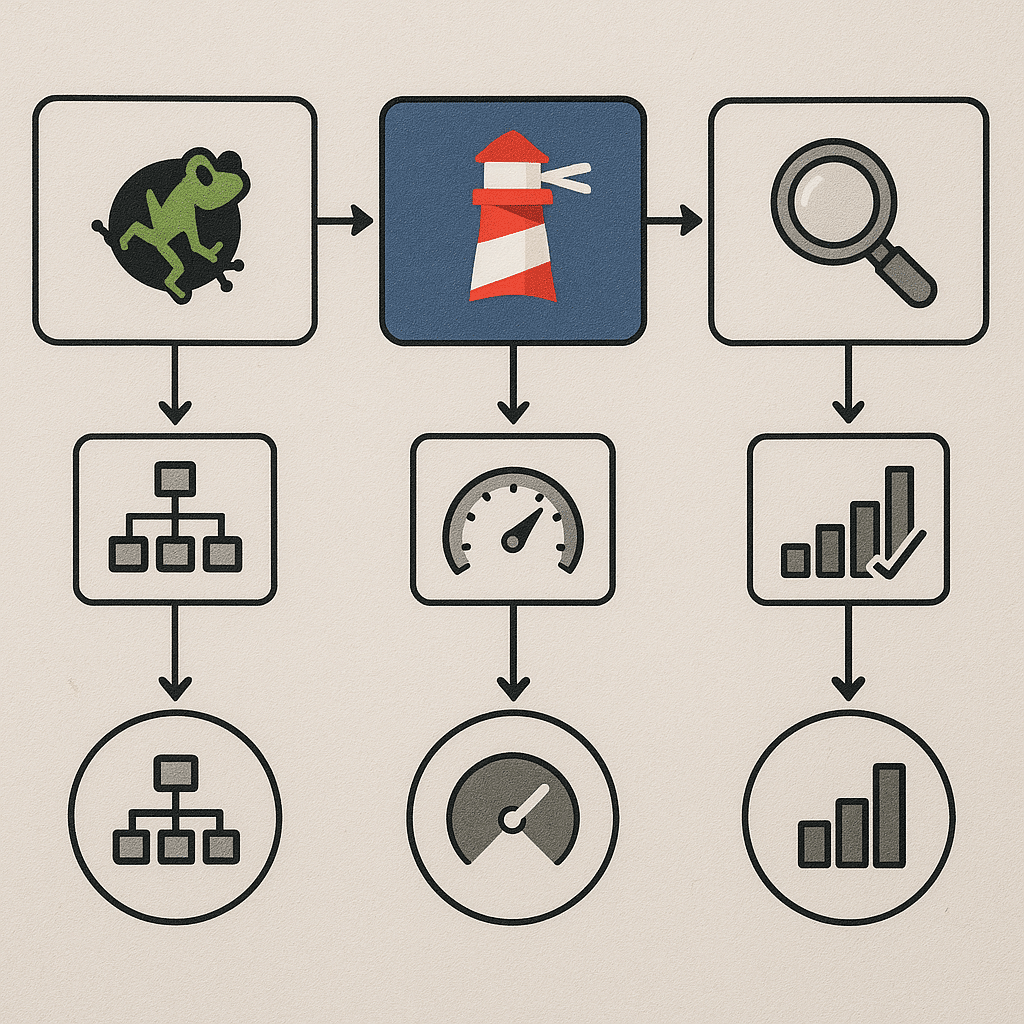

- Technical Health — Core Web Vitals and crawlability checks using Lighthouse, PageSpeed Insights, Screaming Frog, and Google Search Console.

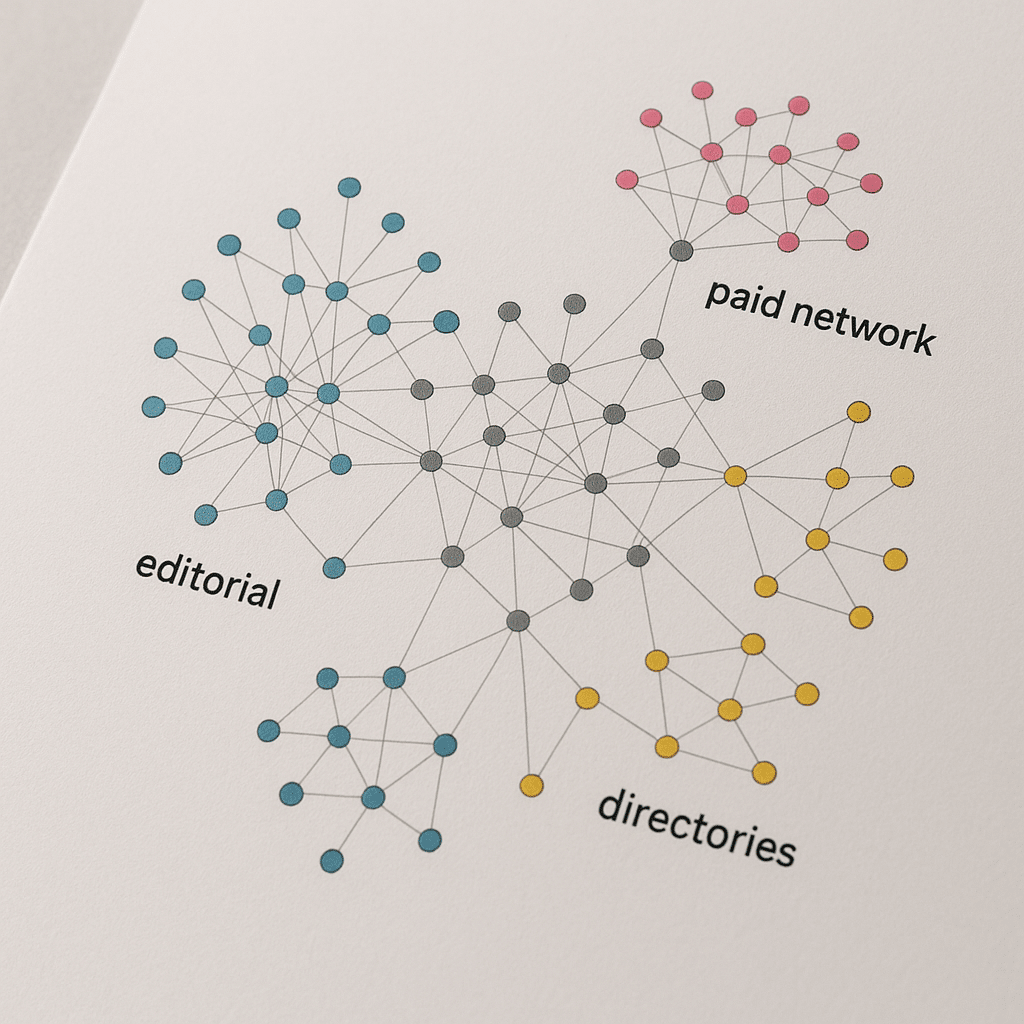

- Link Neighborhood Quality — audit referring domains for topical relevance and co-citation patterns using Ahrefs or Moz and a manual neighbor-graph review to spot paid networks.

- UX For Conversion — template-level evaluation for form speed, CTA clarity, and friction points; run a micro-A/B on page copy or CTA flow before large-scale rollout.

- Measurement Integrity — GA4 tagging, correct conversion modeling, and a test-and-verify period so that metric drift is caught early.

Tools and standards referenced above: Screaming Frog, Lighthouse, Google Search Console, PageSpeed Insights, Ahrefs, Moz, GA4, OpenAI embeddings, spaCy, and schema.org structured data. These are not marketing fluff; pros use them to build objective gates.

How Do You Measure Content Quality?

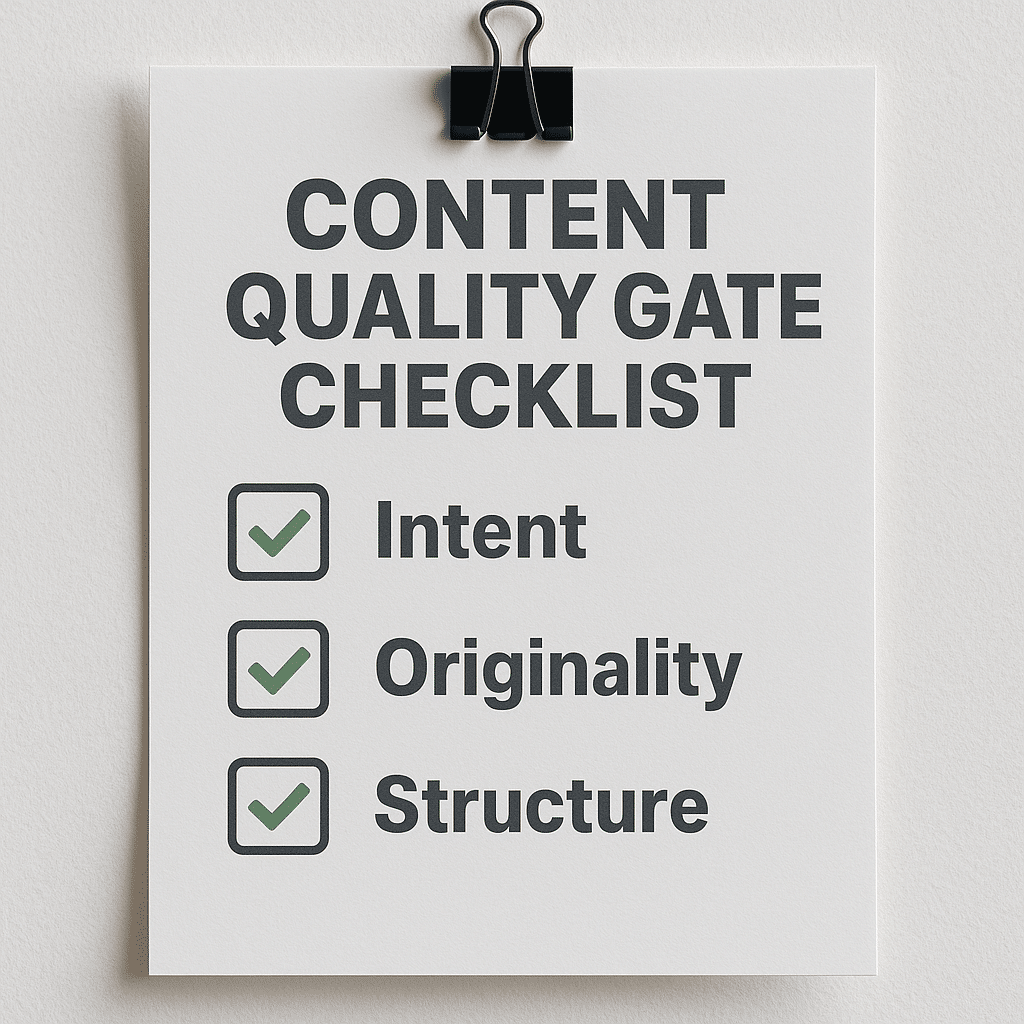

Start with intent match, then check for unique value and structural health. If a page does not satisfy the intent the SERP signals, everything else is noise.

Short answer: Use a three-step gate of intent-match scoring, evidence of originality, and structural health; only pages that pass all three are promoted for link-building and paid amplification.

How it works: First, compute an intent similarity score between representative SERP snippets and your page headings using semantic embeddings. Pros treat a threshold of roughly 0.7 cosine similarity as a practical gate, though you should calibrate it to your dataset. Second, require a uniqueness check in which at least one of these is true: original data, a proprietary process, or a unique UX element such as a custom calculator or local inventory. Third, verify structure: H1/H2 hierarchy, schema.org markup, meta tags, and internal linking that reflects your content cluster strategy.
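A minimal sketch of the intent-match step, assuming you have already turned the page headings and each representative SERP snippet into embedding vectors with whatever model you use (OpenAI embeddings, spaCy vectors, or similar). The function names and the toy three-dimensional vectors are hypothetical; real embeddings have hundreds of dimensions, and the 0.7 default is only the starting point mentioned above, to be recalibrated on your own data.

```python
import math
from typing import Sequence


def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine similarity of two equal-length vectors (0.0 if either is all zeros)."""
    if len(a) != len(b):
        raise ValueError("vectors must have the same dimension")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0
    return dot / (norm_a * norm_b)


def intent_match_score(page_vec: Sequence[float],
                       serp_vecs: Sequence[Sequence[float]]) -> float:
    """Mean similarity between the page-heading vector and each SERP snippet vector."""
    if not serp_vecs:
        raise ValueError("need at least one SERP snippet vector")
    scores = [cosine_similarity(page_vec, s) for s in serp_vecs]
    return sum(scores) / len(scores)


def passes_intent_gate(page_vec: Sequence[float],
                       serp_vecs: Sequence[Sequence[float]],
                       threshold: float = 0.7) -> bool:
    """True when the page clears the (hypothetical, to-be-calibrated) threshold."""
    return intent_match_score(page_vec, serp_vecs) >= threshold


# Toy vectors standing in for real embeddings.
serp = [[0.8, 0.2, 0.3], [1.0, 0.0, 0.4]]
print(passes_intent_gate([0.9, 0.1, 0.3], serp))  # True: aligned with SERP intent
print(passes_intent_gate([0.0, 1.0, 0.0], serp))  # False: off-intent page
```

Averaging across snippets rewards pages that match the dominant intent across the whole SERP rather than a single outlier result; taking the maximum instead would be a looser gate.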

What good looks like: a local service page for a 30-page plumbing site in Phoenix that ranks well will show evidence of local signals—named neighborhoods in H2s, service-area schema, two local citations, and a clear booking CTA above the fold. What bad looks like: copy lifted from competitors, generic H2s, and no schema. In practice, we often see vendors publish dozens of thin service pages and then wonder why rankings don’t lift.
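For the service-area schema in that example, a sketch that builds schema.org JSON-LD for a hypothetical Phoenix plumber. `Plumber` is a schema.org LocalBusiness subtype (via HomeAndConstructionBusiness) and inherits `areaServed` from Organization; the business name, URL, phone number, and neighborhood list are placeholders, and the output should still be checked with the Schema Markup Validator or Google's Rich Results Test.

```python
import json


def local_service_jsonld(name: str, url: str, phone: str, city: str,
                         region: str, neighborhoods: list[str]) -> str:
    """Build schema.org JSON-LD for a plumber that lists its service area."""
    data = {
        "@context": "https://schema.org",
        "@type": "Plumber",  # schema.org subtype of LocalBusiness
        "name": name,
        "url": url,
        "telephone": phone,
        "address": {
            "@type": "PostalAddress",
            "addressLocality": city,
            "addressRegion": region,
        },
        # One Place per neighborhood, mirroring the neighborhood-named H2s.
        "areaServed": [
            {"@type": "Place", "name": f"{n}, {city}"} for n in neighborhoods
        ],
    }
    return json.dumps(data, indent=2)


print(local_service_jsonld(
    name="Example Plumbing Co.",            # placeholder
    url="https://www.example.com/phoenix",  # placeholder
    phone="+1-602-555-0100",                # placeholder
    city="Phoenix",
    region="AZ",
    neighborhoods=["Arcadia", "Ahwatukee", "Maryvale"],
))
```

The printed JSON goes inside a `<script type="application/ld+json">` tag on the page.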

What Technical Standards Matter First

Technical health is not binary. Prioritize the three technical standards that deliver the biggest user and crawl wins: Core Web Vitals, indexability, and structured data. Fix those first.

Why these three: Core Web Vitals are measurable user experience signals that Google says its ranking systems use. According to the Google Web Vitals documentation, a good experience means Largest Contentful Paint of 2.5 seconds or less and Cumulative Layout Shift of 0.1 or less, measured at the 75th percentile of page loads. Indexability gates everything else: if the crawler can't reach or correctly render your important pages, content work is wasted. Structured data makes pages eligible for richer SERP appearances, which can improve CTR without adding new content.

How to verify: run Lighthouse or PageSpeed Insights for Core Web Vitals, use Screaming Frog to surface crawl issues and redirect chains, and check the Page indexing report (formerly Coverage) and the Enhancements reports in Google Search Console for indexing and schema errors. A practical gate: treat any page that fails the LCP or CLS threshold as blocked for amplification until it is fixed.
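A sketch of that amplification gate, assuming you already have 75th-percentile field values per page (for example from PageSpeed Insights or the Search Console Core Web Vitals report); the dataclass and sample URLs are hypothetical. The "good" limits are Google's published thresholds, and the "poor" limits of 4.0 s and 0.25 are Google's as well, used here only to label how far a page is from passing.

```python
from dataclasses import dataclass

# Google's published Core Web Vitals thresholds (assessed at the 75th percentile).
LCP_GOOD_S, LCP_POOR_S = 2.5, 4.0
CLS_GOOD, CLS_POOR = 0.1, 0.25


def rate(value: float, good: float, poor: float) -> str:
    """Map a metric value onto the good / needs improvement / poor bands."""
    if value <= good:
        return "good"
    if value <= poor:
        return "needs improvement"
    return "poor"


@dataclass
class PageVitals:
    url: str
    lcp_p75_s: float  # Largest Contentful Paint, seconds
    cls_p75: float    # Cumulative Layout Shift, unitless


def blocked_for_amplification(pages: list[PageVitals]) -> dict[str, str]:
    """Return {url: reason} for every page that is not 'good' on both LCP and CLS."""
    blocked = {}
    for p in pages:
        lcp = rate(p.lcp_p75_s, LCP_GOOD_S, LCP_POOR_S)
        cls = rate(p.cls_p75, CLS_GOOD, CLS_POOR)
        if lcp != "good" or cls != "good":
            blocked[p.url] = f"LCP {lcp}, CLS {cls}"
    return blocked


pages = [
    PageVitals("/services/drain-cleaning", 2.1, 0.05),
    PageVitals("/services/water-heaters", 3.2, 0.02),
    PageVitals("/book-now", 2.0, 0.31),
]
print(blocked_for_amplification(pages))
```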

Failure mode and consequence: leave redirect chains and malformed canonical tags in place and you risk redirect loops, wasted crawling, and index bloat. We usually see this show up as a loss of organic visibility over a 30 to 90 day window while search engines re-evaluate index priorities. Fixing it typically requires correcting the canonicals, pointing each old URL at its final destination with a single 301, and then a measured recovery period during which rankings oscillate.
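A sketch for surfacing redirect chains and loops, assuming you have exported each redirecting URL and its target (for example from a Screaming Frog crawl) into a simple source-to-target mapping; the mapping below is hypothetical. For every multi-hop chain it reports the final URL the source should 301 to directly.

```python
from typing import Optional


def resolve_redirects(redirects: dict[str, str]) -> dict[str, tuple[Optional[str], int]]:
    """Follow each redirecting URL to its final destination.

    Returns {source: (final_url, hops)}. final_url is None when the chain
    revisits a URL it already passed through, i.e. a redirect loop.
    """
    resolved = {}
    for source in redirects:
        seen = {source}
        current: Optional[str] = source
        hops = 0
        while current in redirects:
            current = redirects[current]
            hops += 1
            if current in seen:
                current = None  # loop detected
                break
            seen.add(current)
        resolved[source] = (current, hops)
    return resolved


redirects = {  # hypothetical export: source URL -> redirect target
    "/old-plumbing": "/plumbing",
    "/plumbing": "/services/plumbing",
    "/services/plumbing": "/services/plumbing/",
    "/promo-a": "/promo-b",
    "/promo-b": "/promo-a",
}
for src, (final, hops) in resolve_redirects(redirects).items():
    if final is None:
        print(f"{src}: redirect loop, fix manually")
    elif hops > 1:
        print(f"{src}: {hops}-hop chain, 301 straight to {final}")
```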

How Do Pros Validate Backlink Quality?

Backlinks are not just about domain authority metrics; pros examine neighborhood signals, anchor diversity, and editorial intent. A single ‘high DA’ link can be useless if it’s surrounded by spam.

Short answer: Validate referring domains for topical relevance, traffic authenticity, anchor text diversity, and co-citation neighborhoods; reject links that live in dense paid-network clusters or on pages with thin editorial content.

How it works operationally: export referring domains from Ahrefs or Moz and segment by topical relevance and organic traffic. Then perform a neighbor-graph audit: map the outbound links from those referring domains and check whether they point to logically related sites or a pattern of monetized links. We often spot paid networks by clusters of domains that cross-link in non-topical patterns and share identical footer scripts or ad tags.
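A sketch of the neighbor-graph step, assuming you have collected each referring domain's outbound link targets into a mapping (the domains below are invented). It treats two referring domains as neighbors when either links to the other, then returns connected clusters of at least `min_size` domains (three by default, an illustrative choice). A dense cluster like that is a candidate for manual review, not an automatic verdict.

```python
from collections import defaultdict


def cross_link_clusters(outbound: dict[str, set[str]], min_size: int = 3) -> list[set[str]]:
    """Group referring domains that link to each other into connected clusters."""
    domains = set(outbound)
    graph = defaultdict(set)
    for src, targets in outbound.items():
        for dst in targets & domains:  # keep only links between referring domains
            if dst != src:
                graph[src].add(dst)
                graph[dst].add(src)  # undirected: either direction counts

    clusters, visited = [], set()
    for start in domains:
        if start in visited or start not in graph:
            continue
        stack, component = [start], set()
        while stack:  # depth-first walk of one connected component
            node = stack.pop()
            if node in component:
                continue
            component.add(node)
            stack.extend(graph[node] - component)
        visited |= component
        if len(component) >= min_size:
            clusters.append(component)
    return clusters


outbound = {
    "cheap-links-a.example": {"cheap-links-b.example", "casino.example", "yourclient.example"},
    "cheap-links-b.example": {"cheap-links-c.example", "payday.example", "yourclient.example"},
    "cheap-links-c.example": {"cheap-links-a.example", "yourclient.example"},
    "phoenix-news.example": {"yourclient.example", "city-hall.example"},
}
print(cross_link_clusters(outbound))
```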

What good looks like: a handful of links from locally relevant publishers, industry associations, or niche forums with editorial context and natural anchor text. What bad looks like: a sudden spike in low-quality referrals, identical anchor text across dozens of domains, or links coming from directories with no topical overlap. In practice, recovering from a toxic neighborhood requires link removals and disavowal plus a period of careful outreach, and it can delay SEO momentum.
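To put a number on identical anchor text across many domains, a sketch that measures how concentrated anchors are across referring domains. It assumes one anchor per referring domain for simplicity, and the 30% flag threshold is an illustrative assumption rather than an industry standard; calibrate it against link profiles you know are natural.

```python
from collections import Counter


def anchor_concentration(anchors_by_domain: dict[str, str]) -> tuple[str, float]:
    """Return the most common normalized anchor and its share of referring domains."""
    if not anchors_by_domain:
        raise ValueError("no anchors supplied")
    counts = Counter(a.strip().lower() for a in anchors_by_domain.values())
    anchor, count = counts.most_common(1)[0]
    return anchor, count / len(anchors_by_domain)


def flag_anchor_pattern(anchors_by_domain: dict[str, str], max_share: float = 0.3) -> bool:
    """Flag when one exact anchor dominates (threshold is an assumption)."""
    _, share = anchor_concentration(anchors_by_domain)
    return share > max_share


natural = {"a.example": "Example Plumbing", "b.example": "this guide",
           "c.example": "www.example.com", "d.example": "water heater repair tips"}
spiky = {f"site{i}.example": "best plumber phoenix" for i in range(8)}
spiky["e.example"] = "Example Plumbing"
print(flag_anchor_pattern(natural), flag_anchor_pattern(spiky))
```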

User Experience Standards That Affect Conversions

UX for SEO is not the same as UX for product. For marketing pages you must optimize the template level first, then the copy. A conversion-focused quality standard catches friction before traffic is amplified.

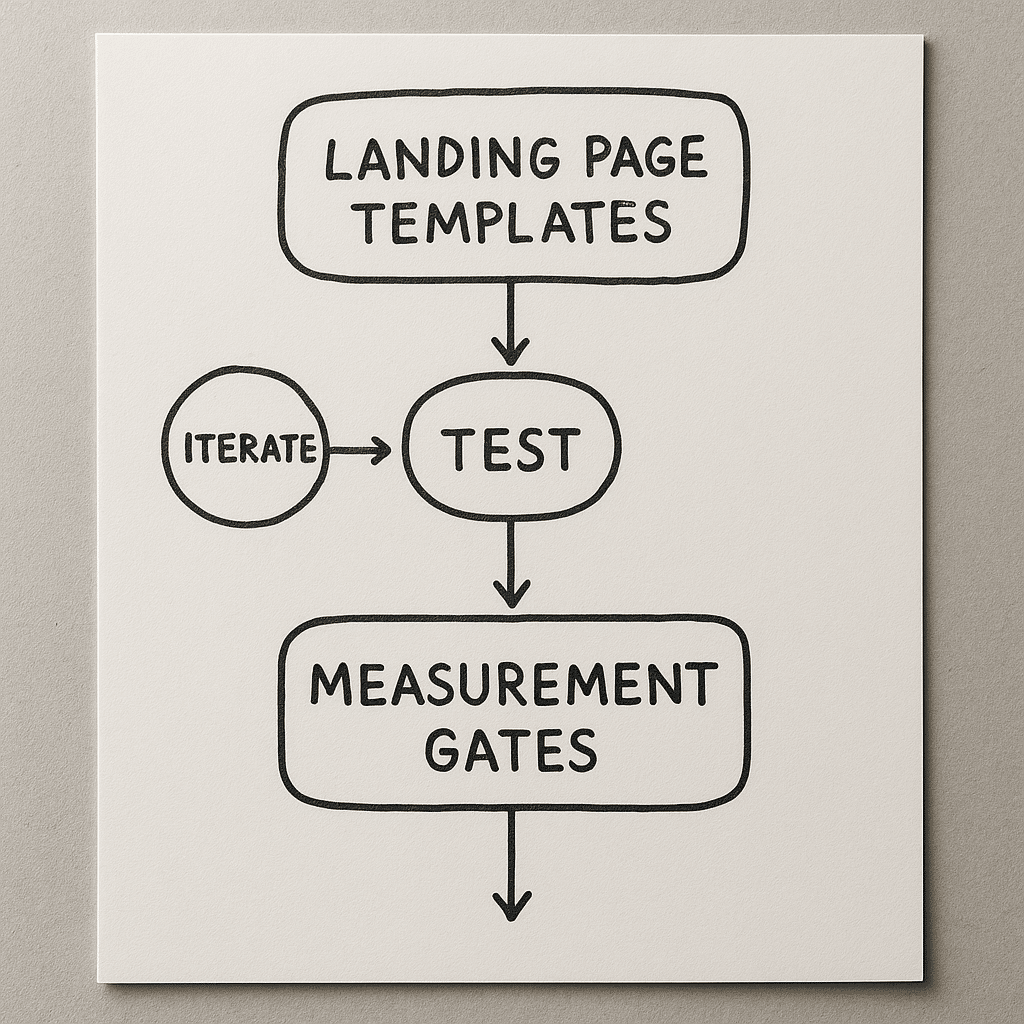

How to measure: run session recordings and funnel analytics to isolate the micro-interactions that kill conversions, such as slow form validation, excessive modals, or unclear CTAs. A quick numeric gate we use: the critical form must render and validate within a fixed time budget, set somewhere between 1 and 3 seconds, on a mid-tier mobile device. If validation misfires or the page triggers unnecessary modals, the page fails the gate.
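A sketch of that gate, assuming you have collected several timing samples (in seconds) for the critical form on a mid-tier mobile device, for example from repeated lab runs or real-user monitoring. The nearest-rank 75th percentile, the 2.0-second budget picked from inside the 1 to 3 second range, and the zero-modal rule are all illustrative assumptions.

```python
import math


def percentile(samples: list[float], pct: float) -> float:
    """Nearest-rank percentile, pct in (0, 100]."""
    if not samples:
        raise ValueError("no samples")
    ordered = sorted(samples)
    rank = math.ceil(pct / 100 * len(ordered))
    return ordered[max(rank, 1) - 1]


def form_gate(ready_times_s: list[float], modal_count: int,
              budget_s: float = 2.0, max_modals: int = 0) -> bool:
    """Pass only if p75 render-and-validate time fits the budget and no extra modals fire."""
    return percentile(ready_times_s, 75) <= budget_s and modal_count <= max_modals


print(form_gate([1.4, 1.6, 1.7, 2.4], modal_count=0))  # p75 = 1.7 s
print(form_gate([1.4, 1.6, 2.6, 3.1], modal_count=0))  # p75 = 2.6 s
print(form_gate([1.2, 1.3, 1.4, 1.5], modal_count=1))  # an unnecessary modal fired
```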

Scenario: a 12-person agency is moving from HubSpot to a custom stack under tight budget and launch-timeline constraints. We treat template parity as a quality standard: the top three landing page templates must replicate the current CTA location, tracking, and user journey before any redesign is rolled out. In practice, skipping this step produces conversion drop-offs even when traffic increases.
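Template parity can be checked mechanically before launch. A sketch, assuming you record each template's CTA position, tracked events, and journey steps in a small spec; the field names, labels, and event names are hypothetical, and the comparison only covers what you choose to record.

```python
from dataclasses import dataclass, fields


@dataclass(frozen=True)
class TemplateSpec:
    cta_position: str               # e.g. "above_fold_right" (hypothetical label)
    tracked_events: frozenset[str]  # GA4 event names the template must fire
    journey: tuple[str, ...]        # ordered steps from landing page to thank-you page


def parity_gaps(live: TemplateSpec, candidate: TemplateSpec) -> list[str]:
    """List each recorded attribute where the new template differs from the live one."""
    gaps = []
    for f in fields(TemplateSpec):
        old, new = getattr(live, f.name), getattr(candidate, f.name)
        if old != new:
            gaps.append(f"{f.name}: {old!r} -> {new!r}")
    return gaps


live = TemplateSpec("above_fold_right",
                    frozenset({"form_start", "generate_lead"}),
                    ("landing", "form", "thank_you"))
candidate = TemplateSpec("below_fold",
                         frozenset({"form_start"}),
                         ("landing", "form", "thank_you"))
for gap in parity_gaps(live, candidate):
    print(gap)  # any output means the new template is not yet at parity
```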

Failure mode: redesigns that neglect measurement often look good but reduce conversions. We’ve seen templates that improved perceived aesthetics but removed inline reassurances and social proof, causing conversion rates to fall. Always A/B test redesigns on priority pages first.
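When you A/B test a redesign, one common way to check whether a conversion drop is more than noise is a two-proportion z-test. A minimal standard-library sketch; the session and conversion counts are made up, and for small samples or many simultaneous comparisons a proper statistics package and a pre-planned sample size are the safer route.

```python
import math


def two_proportion_z_test(conv_a: int, n_a: int, conv_b: int, n_b: int) -> tuple[float, float]:
    """Return (z, two-sided p-value) for the conversion-rate difference B - A."""
    p_a, p_b = conv_a / n_a, conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        return 0.0, 1.0
    z = (p_b - p_a) / se
    # Two-sided p-value from the standard normal CDF, written with math.erf.
    p_value = 2 * (1 - 0.5 * (1 + math.erf(abs(z) / math.sqrt(2))))
    return z, p_value


# Control template: 200 conversions from 4,000 sessions; redesign: 150 from 4,000.
z, p = two_proportion_z_test(200, 4000, 150, 4000)
print(f"z = {z:.2f}, p = {p:.4f}")
```

A negative z with a small p-value suggests the redesign genuinely converts worse and should not replace the live template.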

How to Set Quality Gates Before Publishing

Quality gates are the operational rules that prevent low-quality pages from entering indexation or paid campaigns. Set them, enforce them, and measure compliance.

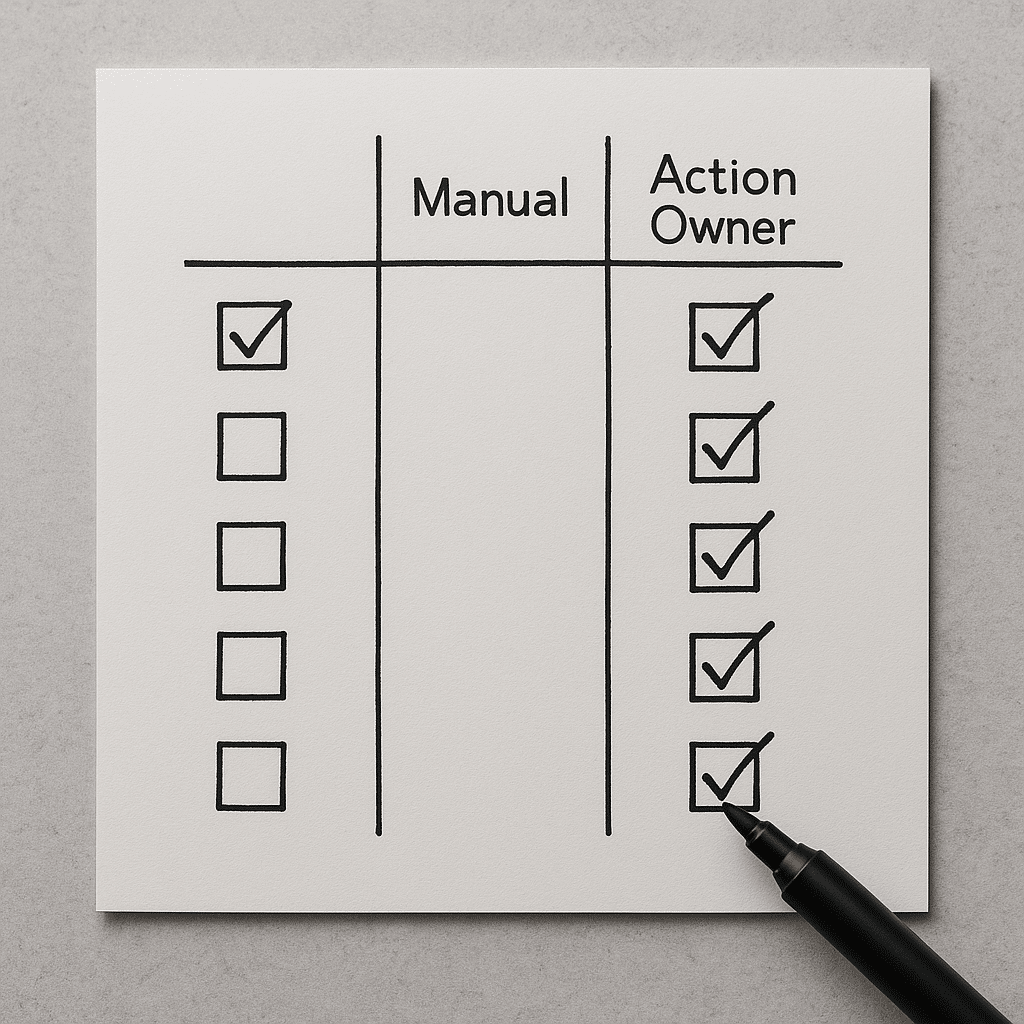

What a gate looks like: a lightweight validation checklist that runs as a pre-publish audit. Typical gate items include:

- an intent-match score above your threshold

- LCP and CLS within the Core Web Vitals thresholds

- schema present and validated

- canonical configured

- internal links present

- at least three credible source links, or original data

- GA4 events and conversions firing correctly
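These gate items map naturally onto a pass/fail record. A sketch, assuming the automated checks (crawler, Lighthouse, schema validator, GA4 test) have already run and written their results into the fields below, and that a reviewer sets the human-review flag; all field names and the 0.7 intent threshold are hypothetical placeholders, while the LCP and CLS limits mirror Google's thresholds.

```python
from dataclasses import dataclass


@dataclass
class PageCheck:
    url: str
    intent_score: float
    lcp_p75_s: float
    cls_p75: float
    schema_valid: bool
    canonical_ok: bool
    internal_links: int
    credible_sources: int
    has_original_data: bool
    ga4_conversion_verified: bool
    human_review_passed: bool  # the short intent/originality review


def gate_failures(p: PageCheck, intent_threshold: float = 0.7) -> list[str]:
    """Return the names of failed gate items; an empty list means the page may publish."""
    checks = {
        "intent match": p.intent_score >= intent_threshold,
        "LCP": p.lcp_p75_s <= 2.5,
        "CLS": p.cls_p75 <= 0.1,
        "schema": p.schema_valid,
        "canonical": p.canonical_ok,
        "internal links": p.internal_links > 0,
        "sources or original data": p.credible_sources >= 3 or p.has_original_data,
        "GA4 conversion": p.ga4_conversion_verified,
        "human review": p.human_review_passed,
    }
    return [name for name, ok in checks.items() if not ok]


page = PageCheck(url="/services/leak-detection", intent_score=0.74, lcp_p75_s=2.3,
                 cls_p75=0.04, schema_valid=True, canonical_ok=True, internal_links=4,
                 credible_sources=1, has_original_data=False,
                 ga4_conversion_verified=True, human_review_passed=True)
print(gate_failures(page))  # ['sources or original data']
```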

Why this works: it turns subjective quality into objective pass/fail signals. In practice, when teams adopt gates, the time spent fixing post-publish emergencies drops dramatically and budget for amplification is spent on assets that scale instead of remediating basic errors.

Operational template: use a combination of automated checks (Screaming Frog, Lighthouse, schema validator) and a 5-minute human review for intent and originality. Failure mode: skipping the human review lets semantically misaligned pages go live and wastes link-building and paid spend.

Evaluation Checklist: What to Ask When Vetting Quality

Use this checklist in vendor calls or internal reviews. Ask for concrete artifacts, not promises.

- Show me the intent-match method you use and an example score for one of our target queries.

- Give a recent Screaming Frog export and tell me which three issues you will fix first.

- Demonstrate the link neighborhood audit for one referring domain and how you flagged paid networks.

- Provide the Core Web Vitals report for our priority pages; confirm LCP and CLS thresholds and the remediation timeline.

- Share the pre-publish gate checklist and the last three pages that failed it, with remediation notes.

- Confirm GA4 events and conversion attribution, and show a test where you simulated the conversion from ad click to thank-you page.

Red flags to watch for: vague replies such as ‘we improve engagement’ that never say which metrics will move or how improvement is measured, no sample exports or reports, or an inability to name which pages are gating amplification.

Practical next step: run a 10-minute audit on one high-priority landing page using these checkpoints. If the vendor can’t produce the requested artifacts in 48 hours, treat that as a reliability issue.

Internal links to related standards and services: see DIQSEO’s SEO Agency In Florida page for operational workflows, compare your landing page gating with the Landing Page Design Agency In Florida checklist, and review examples of site redesign gates on the Website Redesign Company In Florida page. For amplification and paid channels, align quality standards with your paid search setup (Google AdWords Management Services In Arizona) and your creative gating with Paid Social Advertising Agency In Arizona.

References and Evidence

According to Google Web Vitals documentation, Largest Contentful Paint and Cumulative Layout Shift are part of page experience signals that sites should measure and improve. According to the W3C Web Accessibility Initiative, structured and semantic markup improves machine interpretability and accessibility. According to the Nielsen Norman Group, microinteraction friction like slow form validation significantly reduces conversion rates across devices.

These referenced sources form the backbone of the technical and UX gates we described. Use them to demand evidence rather than slogans in vendor conversations.

External links for deeper reading: the Google Web Vitals documentation for thresholds and definitions, W3C Web Accessibility Initiative for markup and semantics, and Nielsen Norman Group for UX measurement practices.

Conclusion: The Single Most Useful Takeaway

Don’t buy activity; buy gates. If a vendor or team can show you pre-publish quality gate artifacts and a remediation log for past failures, you can trust their outputs more than their promises. Your next action: pick one priority page, run the 10-minute audit from the checklist, and require your vendor to produce the requested artifacts within 48 hours.

Need help running the audit or translating the vendor artifacts into a roadmap? Ask for a short, paid technical review focused on the five levers in this article.

Frequently Asked Questions

What Are the Most Important Quality Metrics to Track Daily?

Short answer: Track Core Web Vitals for page experience, crawl errors and coverage status in Search Console, and conversion event integrity in GA4; watch for sudden deviations rather than absolute values.

To go deeper, monitor LCP, CLS, and Interaction to Next Paint (INP, the stable Core Web Vitals responsiveness metric), plus Page indexing anomalies in Search Console and drops in GA4 events. Daily monitoring flags regressions quickly, so you can roll back changes or push fixes before amplification spend is wasted.
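Watching for sudden deviations rather than absolute values can be automated with a simple trailing-window check. A sketch, assuming one value per day per metric (for example a GA4 conversion event count); the 14-day window and the 3-standard-deviation band are illustrative choices, not recommendations from Google or any tool.

```python
import statistics


def is_anomalous(history: list[float], today: float,
                 window: int = 14, k: float = 3.0) -> bool:
    """True if today's value falls outside mean +/- k * stdev of the trailing window."""
    recent = history[-window:]
    if len(recent) < 2:
        return False  # not enough history to judge
    mean = statistics.mean(recent)
    stdev = statistics.stdev(recent)
    if stdev == 0:
        return today != mean
    return abs(today - mean) > k * stdev


daily_conversions = [41, 39, 44, 40, 42, 38, 43, 45, 40, 41, 39, 42, 44, 40]
print(is_anomalous(daily_conversions, 12))  # sharp drop: check tagging first
print(is_anomalous(daily_conversions, 43))  # normal day-to-day variation
```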

How Long After Fixes Will Rankings Typically Stabilize?

Short answer: Expect an indexing and ranking stabilization window that commonly ranges from 30 to 90 days depending on site size and change scope; technical fixes usually show earlier signal improvements than content changes.

To go deeper, smaller technical fixes that affect bots directly, like fixing robots.txt or resolving canonical chains, often show up in Search Console within days, but ranking volatility can persist while the algorithm re-evaluates relevance. Content changes may take longer because of intent re-assessment and external signals.

Which Tools Prove a Vendor Is Using Professional Quality Standards?

Short answer: Look for evidence of Screaming Frog exports, Lighthouse or PageSpeed reports, Google Search Console coverage and enhancement exports, schema validation, and GA4 conversion test logs.

To go deeper, ask the vendor to walk a page through each tool and show the remediation steps. If they can’t produce a Screaming Frog crawl or a Lighthouse score history, their technical hygiene is likely weak.

Does Schema Markup Actually Move the Needle?

Short answer: Indirectly. Schema doesn’t directly boost relevance, but it improves how search engines present your content and can materially increase CTR and qualified traffic when applied to product and local business types.

To go deeper, schema makes pages eligible for rich results; it does not guarantee them. Use validated schema types from schema.org and check the Enhancements reports in Search Console. Note that since August 2023 Google shows FAQ rich results only for well-known, authoritative government and health sites, so FAQ markup rarely changes SERP appearance for a typical business. Remember, schema is a conversion lever more than a pure ranking lever.

How Can I Spot a Toxic Link Neighborhood Quickly?

Short answer: Export referring domains, filter by low topical relevance and low unique content, and inspect clusters that cross-link with identical anchor text; those are strong indicators of paid or automated networks.

To go deeper, run a co-citation graph and look for domains that share the same outbound link patterns or ad tags. If multiple referring domains share near-identical footer links or content templates, treat them as suspect and prioritize outreach or disavowal.
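A sketch of the shared-template check, assuming you have already scraped each suspect domain's footer outbound links and ad-tag IDs (the domains and tag IDs below are invented, and the scraping itself is outside this sketch). It reports ad tags reused across domains and pairs of domains whose footer link sets are near-identical by Jaccard similarity; the 0.8 cutoff is an illustrative assumption.

```python
from collections import defaultdict
from itertools import combinations


def jaccard(a: set[str], b: set[str]) -> float:
    """Share of links two sets have in common (0.0 when both are empty)."""
    return len(a & b) / len(a | b) if a | b else 0.0


def suspect_signals(footer_links: dict[str, set[str]],
                    ad_tags: dict[str, set[str]],
                    footer_threshold: float = 0.8) -> dict[str, list]:
    """Report ad-tag IDs reused across domains and near-identical footer link sets."""
    tag_users = defaultdict(set)
    for domain, tags in ad_tags.items():
        for tag in tags:
            tag_users[tag].add(domain)
    shared_tags = {tag: sorted(ds) for tag, ds in tag_users.items() if len(ds) > 1}

    similar_footers = [
        (a, b) for a, b in combinations(sorted(footer_links), 2)
        if jaccard(footer_links[a], footer_links[b]) >= footer_threshold
    ]
    return {"shared_ad_tags": sorted(shared_tags.items()),
            "similar_footers": similar_footers}


footer_links = {
    "blog-one.example": {"casino.example", "loans.example", "pills.example"},
    "blog-two.example": {"casino.example", "loans.example", "pills.example"},
    "plumbing-assoc.example": {"members.example", "events.example"},
}
ad_tags = {
    "blog-one.example": {"ad-tag-123"},
    "blog-two.example": {"ad-tag-123"},
    "plumbing-assoc.example": set(),
}
print(suspect_signals(footer_links, ad_tags))
```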

What Is a Practical Content Gate for Local Service Pages?

Short answer: Require local signals such as service-area schema, neighborhood-specific H2s, at least one verified local citation, a clear booking CTA, and an intent-match score above your calibrated threshold before promoting the page.

To go deeper, validate each gate item with one artifact: a schema validator screenshot, a sample citation, and your intent-match output. If any artifact is missing, the page should not be amplified with links or paid spend.

How Do I Ensure Measurement Integrity After a Redesign?

Short answer: Create a measurement runbook that includes pre-launch event mapping, a QA checklist for GA4 and tag firing, and a post-launch monitoring window of at least two weeks to catch drift.

To go deeper, simulate conversion flows from ad click to thank-you page and verify events in GA4 DebugView and server logs. Keep a rollback plan that restores previous tracking if conversions drop unexpectedly.
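For the post-launch QA step, a sketch that compares the runbook's expected event sequence with the events actually observed while simulating a conversion (collected however you prefer, such as noting them from DebugView or parsing server logs). The event names are examples only; substitute the names in your own tagging plan.

```python
def verify_conversion_flow(expected: list[str], observed: list[str]) -> dict[str, list[str]]:
    """Compare the runbook's expected event order with events seen in a test run."""
    missing = [e for e in expected if e not in observed]
    unexpected = sorted(set(observed) - set(expected))
    # Order check covers only expected events that fired, keeping first occurrences.
    fired = list(dict.fromkeys(e for e in observed if e in expected))
    expected_order = [e for e in expected if e in fired]
    out_of_order = [] if fired == expected_order else fired
    return {"missing": missing, "unexpected": unexpected, "out_of_order": out_of_order}


expected = ["page_view", "form_start", "form_submit", "generate_lead"]
observed = ["page_view", "form_start", "form_submit", "scroll"]
result = verify_conversion_flow(expected, observed)
print(result)
```

A launch passes this check only when all three lists are empty; a non-empty `missing` list is the usual trigger for the rollback plan.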