Innovation in SEO & Digital Marketing: Key Metrics That Matter

This guide shows how to spot genuine innovation in SEO, which metrics to track, and how to evaluate agencies. You’ll be able to design a measurement plan that ties content and technical experiments to revenue signals, and to vet vendors using three operational tests any VP of Sales can run.

By the end you will be able to do three things: choose 3-5 live metrics that prove an experiment worked, run a simple field test to validate technical fixes, and ask vendors the questions that separate real innovation from vanity reporting.

What Metrics Should Sales Leaders Track for SEO Innovation?

Sales leaders must translate SEO signals into pipeline impact. Tracking organic sessions alone keeps you in the dark. Instead, pick a short list of metrics that connect content and technical work to conversion events sales care about.

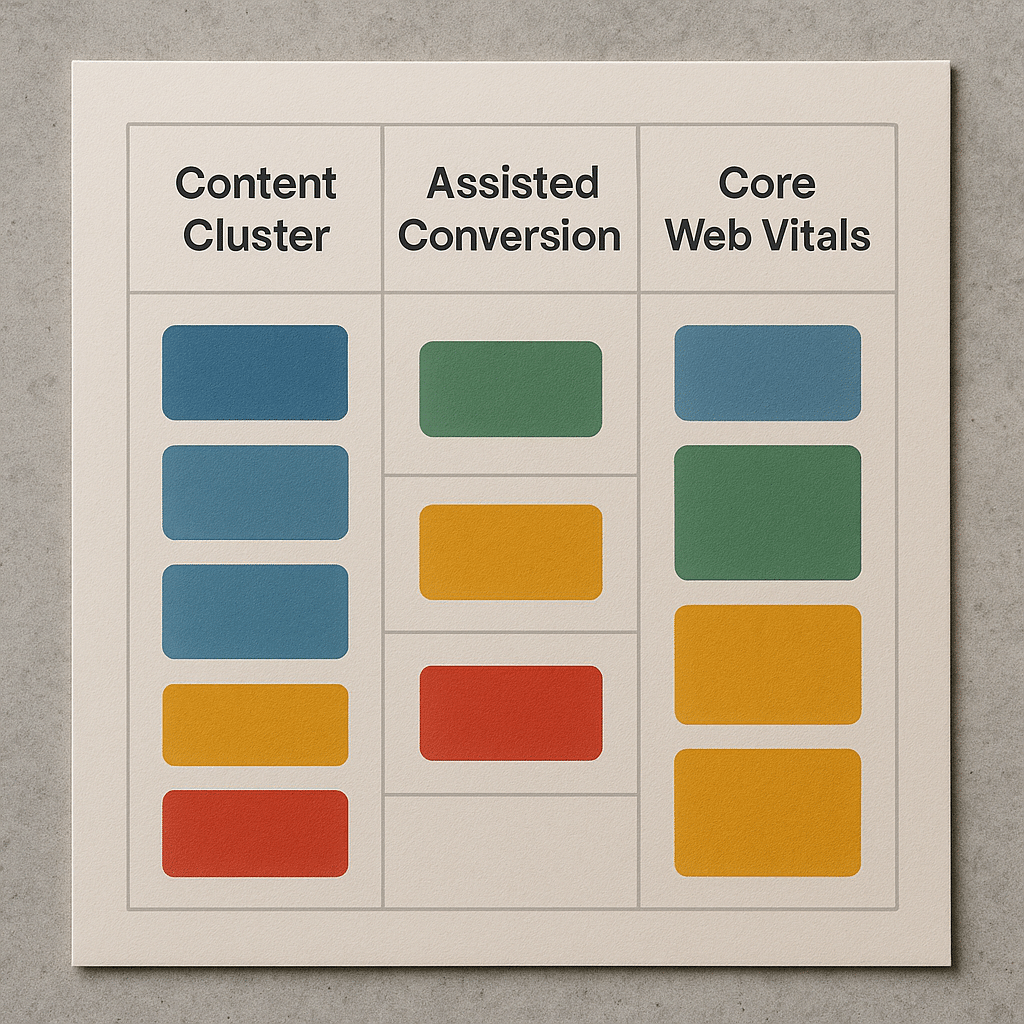

Short answer: Track event-level conversions tied to content clusters, assisted-organic pipeline, and a field-performance metric such as Core Web Vitals when conversion funnels depend on page load behavior.

Why this matters: organic traffic can rise while pipeline stalls if the wrong pages attract users. In practice, teams report sessions and rankings while revenue attribution stays fuzzy. The practical metric set we use combines three elements: cluster-level assisted conversions, a time-to-engagement threshold that maps to micro-conversions, and a quality signal from real-user metrics tied to conversion pages.

- Cause: content drives discovery. Effect: leads enter the funnel via asset pages. Consequence: if you can map event-level conversions to those pages, you see which topics deliver pipeline.

- Cause: slow interactive pages lose users. Effect: engagement drops before form submission. Consequence: measuring Core Web Vitals on landing pages lets you prioritize engineering work with direct funnel impact.
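As a rough illustration of cluster-level assisted conversions, the sketch below counts how often a cluster appears in a converting journey before the final page. The page paths, cluster names, and the `PAGE_CLUSTER` mapping are hypothetical; a real setup would derive them from your own content tagging.

```python
from collections import Counter

# Hypothetical mapping from page path to content cluster.
PAGE_CLUSTER = {
    "/guides/crm-migration": "migration",
    "/guides/crm-checklist": "migration",
    "/blog/pricing-models": "pricing",
    "/demo": "conversion",
}

def assisted_conversions(journeys):
    """Count, per cluster, converting journeys where the cluster appeared
    before the final page. Each cluster counts once per journey."""
    counts = Counter()
    for pages, converted in journeys:
        if not converted or len(pages) < 2:
            continue
        assisting = {PAGE_CLUSTER.get(page) for page in pages[:-1]}
        assisting.discard(None)  # ignore pages with no cluster tag
        counts.update(assisting)
    return counts

# Illustrative journeys: (ordered page paths, did the journey convert?)
journeys = [
    (["/guides/crm-migration", "/guides/crm-checklist", "/demo"], True),
    (["/blog/pricing-models", "/demo"], True),
    (["/guides/crm-migration"], False),
]
result = assisted_conversions(journeys)
print(dict(result))
```

Counting each cluster once per journey keeps a visitor who reads several assets in one cluster from inflating that cluster's total.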

How to Measure Innovation in Content Performance

Measuring content innovation means moving past pageviews to signals that reveal influence across the buyer journey. A content innovation metric should show whether new formats, structures, or promotional tactics improve the content’s role in the funnel.

Short answer: Use content-cluster attribution, event-scoped revenue from Google Analytics 4 exports to BigQuery, and internal link-weight scoring to quantify how content drives assisted conversions and downstream revenue.

How it works: tag content by cluster and publish with a consistent canonical and schema:Article markup. Instrument GA4 events for micro-conversions like resource download, demo click, or chat-start. Export GA4 to BigQuery and run queries that tie session paths to eventual conversion events, reassigning credit across sessions rather than using single-touch attribution.
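One way to reassign credit across sessions is a time-decay weighting, sketched below. This is an assumption chosen for illustration, not the only valid model: the `half_life_days` value, the cluster names, and the day offsets are all hypothetical, and in practice the touches would come from your BigQuery export rather than a hard-coded list.

```python
from collections import defaultdict

def time_decay_credit(touches, conversion_day, half_life_days=7.0):
    """touches: list of (cluster, day) pairs for one converting user.
    Returns {cluster: share of credit}; shares sum to 1.0.
    Touches closer to the conversion receive more weight."""
    weights = defaultdict(float)
    for cluster, day in touches:
        age_days = conversion_day - day
        weights[cluster] += 0.5 ** (age_days / half_life_days)
    total = sum(weights.values())
    if total == 0:
        return {}  # no touches recorded for this user
    return {cluster: w / total for cluster, w in weights.items()}

# Illustrative path: two visits to the "migration" cluster, one to "pricing".
touches = [("migration", 0), ("pricing", 7), ("migration", 14)]
credit = time_decay_credit(touches, conversion_day=14)
print(credit)
```

A shorter half-life pushes credit toward the last sessions before conversion, while a longer one spreads it across early discovery content, so the value should reflect your typical sales cycle.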

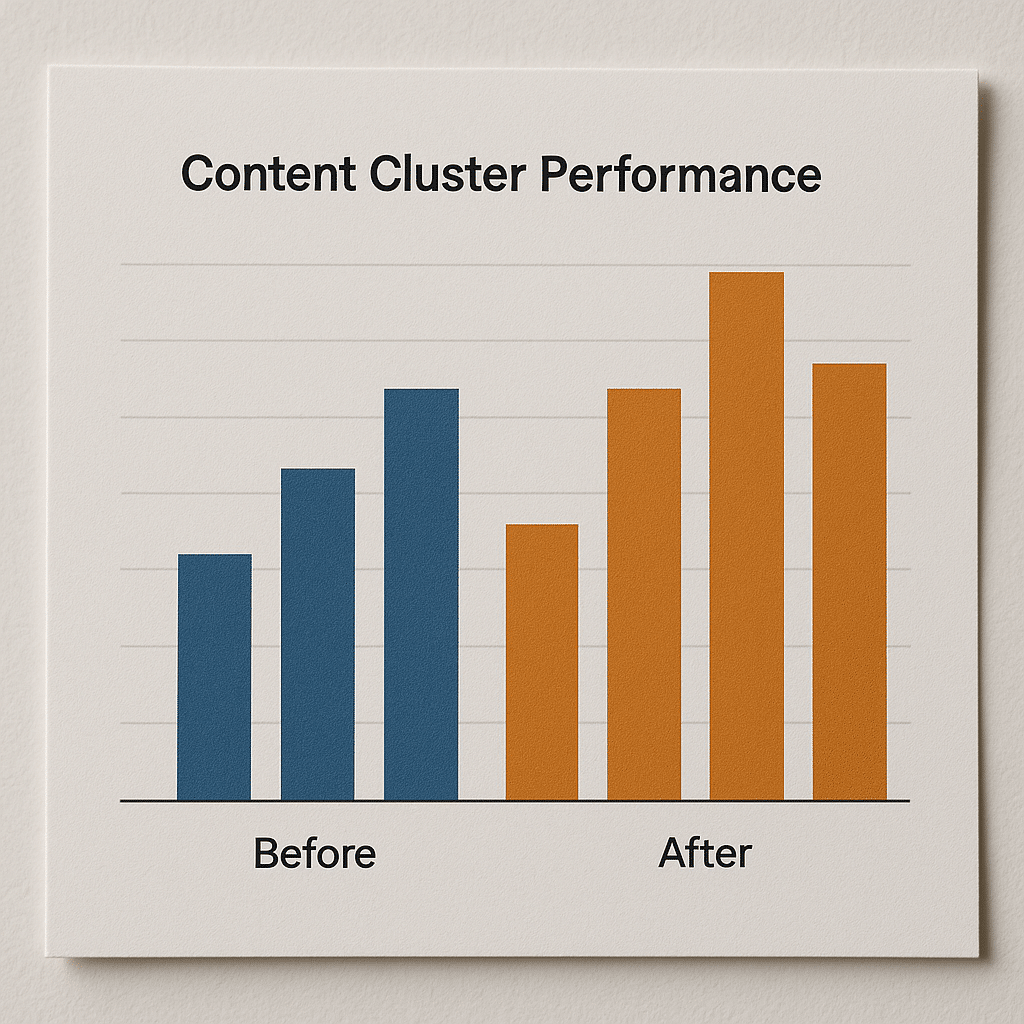

What good looks like versus bad

- Good: cluster-level reports show a consistent increase in assisted conversions after publishing 3 to 6 high-intent assets per cluster, and BigQuery queries show multi-session influence over a 3-6 month window.

- Bad: chasing pageviews by publishing many low-depth posts that generate short sessions but zero assisted conversions, confusing traffic growth for commercial value.

Key insight: We often see an immediate 10-30 percent improvement in content-to-lead attribution precision simply by switching from UA-style session sampling to full-event BigQuery exports and applying a weighted path reattribution. That precision often reveals which cluster actually moved pipeline and which only generated noise.

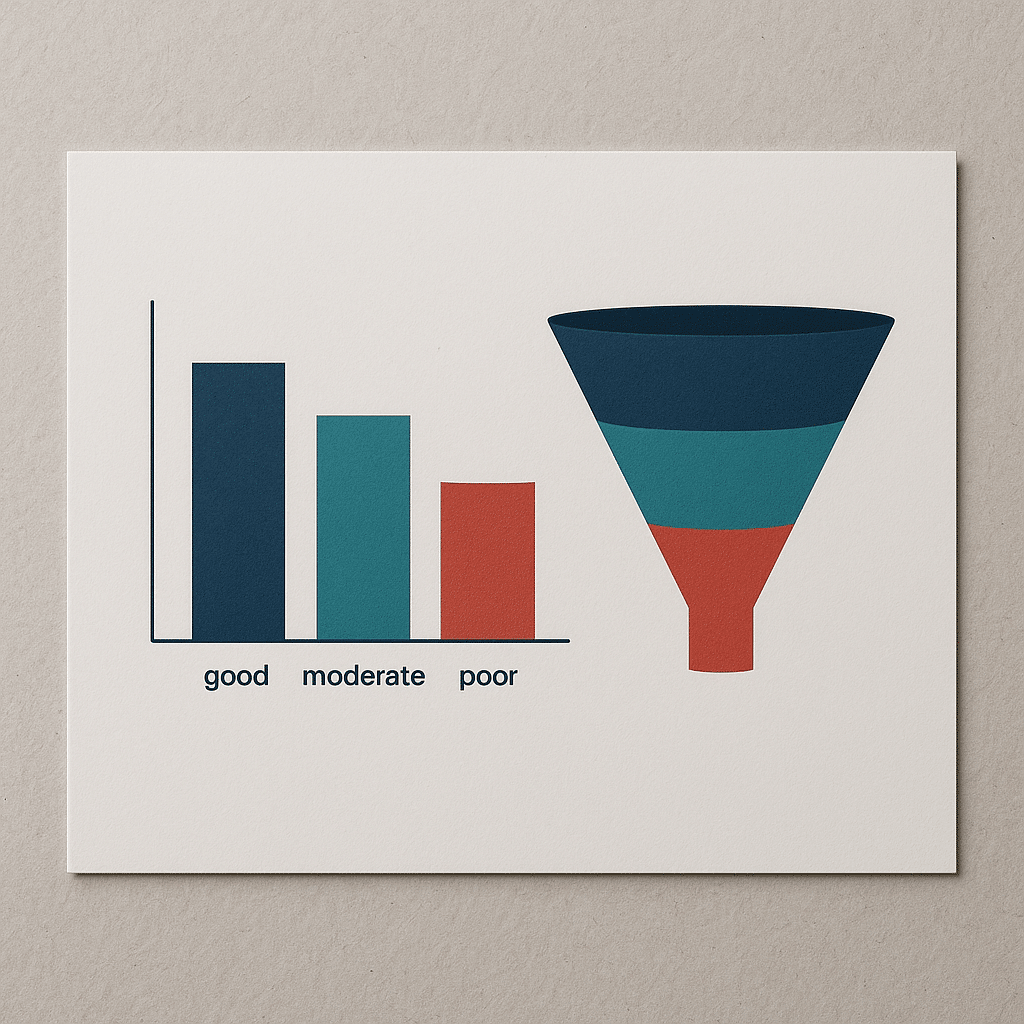

Why Core Web Vitals Matter for Conversion

Core Web Vitals are not a vanity engineering target. They capture user experience in measurable form. If conversion pages fail field thresholds, conversion rates drop even when SEO traffic is healthy.

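Short answer: Track Largest Contentful Paint under 2.5 seconds, First Input Delay under 100 milliseconds, and Cumulative Layout Shift under 0.1 on landing and funnel pages, then tie those RUM metrics to conversion event rates. Note that Google replaced First Input Delay with Interaction to Next Paint (INP) as a Core Web Vital in March 2024, and the "good" threshold for INP is 200 milliseconds or less.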

Why this works: real-user monitoring captures the browser-level experience, including device and network variations. Lab tools like Lighthouse give guidance but don’t reflect real users in low-bandwidth conditions. What actually happens is engineering teams tune for high Lighthouse scores while conversions keep falling because field CLS from third-party embeds shifts CTAs after render.
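Field thresholds are usually judged at the 75th percentile of real-user samples, which is how Google assesses Core Web Vitals. The sketch below applies that idea to the thresholds named above. The metric keys and sample values are hypothetical, and the nearest-rank percentile is a simplifying assumption.

```python
import math

# Thresholds as stated in the text: LCP in seconds, FID in ms, CLS unitless.
THRESHOLDS = {"lcp_s": 2.5, "fid_ms": 100.0, "cls": 0.1}

def p75(values):
    """75th percentile using the nearest-rank method."""
    ordered = sorted(values)
    rank = math.ceil(0.75 * len(ordered))
    return ordered[rank - 1]

def passes_field_thresholds(samples):
    """samples: {metric: [values from real users]} -> {metric: bool}."""
    return {metric: p75(values) <= THRESHOLDS[metric]
            for metric, values in samples.items()}

# Illustrative RUM samples for one landing page.
samples = {
    "lcp_s": [1.8, 2.1, 2.4, 3.9],
    "fid_ms": [20, 40, 60, 250],
    "cls": [0.02, 0.05, 0.12, 0.30],
}
report = passes_field_thresholds(samples)
print(report)
```

In this made-up sample the page passes on load and input delay but fails on layout shift, which is the pattern the paragraph above describes: a page can look healthy in the lab while field CLS still moves the CTA.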

Good vs bad examples

- Good: prioritize fixes that reduce LCP on high-value landing pages, such as preloading hero images instead of lazy-loading them and deferring non-critical CSS, because each 0.5-second improvement in LCP on those pages typically raises engagement and form fills.

- Bad: optimizing the blog index for Lighthouse without auditing conversion landing pages, which leaves revenue pages with blocking third-party scripts causing high FID.

According to Google Search Central, Core Web Vitals are among the page experience signals that Google's ranking systems use. Use field data first, lab tools second.
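To tie a field metric to conversion rates, a simple first pass is to split sessions at the LCP threshold and compare conversion rates on each side. The sketch below assumes your RUM pipeline can emit one `(lcp_seconds, converted)` pair per session; the session data is invented for illustration, and a gap between buckets shows correlation, not proof of cause.

```python
def conversion_rate_by_lcp(sessions, threshold_s=2.5):
    """sessions: list of (lcp_seconds, converted) pairs.
    Returns conversion rates for sessions at or under vs over the threshold."""
    buckets = {"fast": [0, 0], "slow": [0, 0]}  # [conversions, sessions]
    for lcp, converted in sessions:
        key = "fast" if lcp <= threshold_s else "slow"
        buckets[key][0] += int(converted)
        buckets[key][1] += 1
    return {key: (conv / total if total else None)
            for key, (conv, total) in buckets.items()}

# Illustrative sessions on one landing page.
sessions = [
    (1.9, True), (2.2, False), (2.4, True),
    (3.1, False), (4.0, False), (3.3, True),
]
rates = conversion_rate_by_lcp(sessions)
print(rates)
```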


Real-World Scenario: Scaling SEO for a 12-Person B2B Agency

Scenario constraints: a 12-person B2B agency migrating off HubSpot to a modular stack, limited budget to hire developers, sales team closing deals with long sales cycles, and existing site with 120 pages. The CEO wants innovation without blowing the budget.

Short answer: Focus on three things: cluster-based content prioritization, GA4 event export to BigQuery for path attribution, and tightly targeted technical fixes on the top five commercial pages, measured with RUM data.

What we usually see when a small agency does this: they try to rewrite the entire site. That fails. Instead, pick the top five commercial pages by assisted conversion potential, instrument them, and run two 6-week experiments: one on improved content structure and one on a technical speed push. Evaluate both with BigQuery reattribution over a 3-6 month horizon.

Tactical steps

- Map content clusters to buyer stages and choose 3 clusters to prioritize.

- Tag micro-conversions and export GA4 to BigQuery to track influence across long sales cycles.

- Run a technical sprint to hit the Core Web Vitals thresholds on the top conversion pages only.

Trade-offs: you will trade breadth for measurable impact. The upside is faster insight cycles and fewer wasted development hours.

Technical SEO Failure Modes That Kill Innovation

Innovation stalls when basic technical hygiene is ignored. Technical experiments must be reproducible and visible to both marketing and engineering.

Short answer: Common failure modes include missing or inconsistent canonical tags producing duplicate content, improper use of noindex on paginated resources, and optimizing only in lab tools without validating with real-user monitoring and Search Console data.

Why these failures cause damage

- Missing canonical tags cause indexing of many near-duplicate URLs, which dilutes ranking signals and wastes crawl budget.

- Noindexing paginated or filtered pages without a restoration plan breaks internal linking signals and reduces the authority passed to leaf pages.

- Optimizing for Lighthouse score alone can create a false sense of progress because lab scores do not represent lower-end devices or slow mobile networks that make up a significant share of organic users.

Failure consequences we have seen in practice: teams roll out a template tweak that increases indexable URLs and, within weeks, see organic sessions fragment across dozens of near-identical pages, reducing the visibility of the main landing page and complicating attribution.

Tools to catch these issues include Screaming Frog for crawl simulation, Google Search Console for index coverage signals, and real-user metric collection via the Chrome User Experience Report or your own RUM pipeline.
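For a quick spot check before running a full crawl, the canonical audit can be sketched with the Python standard library. The URLs and HTML snippets below are hypothetical, and the status labels are illustrative names, not output from Screaming Frog or Search Console.

```python
from html.parser import HTMLParser

class CanonicalParser(HTMLParser):
    """Collects href values from <link rel="canonical"> tags."""
    def __init__(self):
        super().__init__()
        self.canonicals = []

    def handle_starttag(self, tag, attrs):
        attr = dict(attrs)
        rels = (attr.get("rel") or "").lower().split()
        if tag == "link" and "canonical" in rels and attr.get("href"):
            self.canonicals.append(attr["href"])

def audit_canonical(url, html):
    """Return 'missing', 'multiple', 'self', or 'points_elsewhere'."""
    parser = CanonicalParser()
    parser.feed(html)
    found = parser.canonicals
    if not found:
        return "missing"
    if len(set(found)) > 1:
        return "multiple"
    same = found[0].rstrip("/") == url.rstrip("/")
    return "self" if same else "points_elsewhere"

# Illustrative pages: URL -> fetched HTML.
pages = {
    "https://example.com/pricing":
        '<head><link rel="canonical" href="https://example.com/pricing"></head>',
    "https://example.com/pricing?ref=nav":
        "<head><title>Pricing</title></head>",
    "https://example.com/pricing?sort=asc":
        '<head><link rel="canonical" href="https://example.com/pricing"/></head>',
}
results = {url: audit_canonical(url, html) for url, html in pages.items()}
print(results)
```

Pages flagged `missing` or `multiple` are the ones most likely to produce the near-duplicate indexing described above.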

How to Evaluate an Agency’s Innovation Credibility

Vendors sell innovation. Buyers verify it. The quickest way to separate substance from spin is to ask targeted operational questions and demand evidence you can test in a week.

Short answer: Ask for a live GA4 BigQuery export sample, a list of recent controlled experiments with success criteria, and access to RUM data for two candidate landing pages. If they refuse, treat that as a red flag.

Questions that reveal competence

- Show me the event taxonomy and BigQuery schema you use to tie content to revenue. If the agency cannot show schema and sample queries, they are likely using surface metrics only.

- Share a reproducible experiment: what was the hypothesis, the control, the variant, the timeframe, and the decision rule. Innovation is repeatable experiments, not anecdotes.

- Provide Search Console and RUM evidence for at least two technical fixes they claim to have shipped.
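A reproducible experiment needs a decision rule that is fixed before results come in. The sketch below shows one possible rule, a one-sided two-proportion z-test on conversion rate. The `Experiment` fields, the 0.05 significance level, and the sample counts are assumptions for illustration; an agency may legitimately use a different test.

```python
from dataclasses import dataclass
from math import sqrt
from statistics import NormalDist

@dataclass
class Experiment:
    hypothesis: str
    control_visitors: int
    control_conversions: int
    variant_visitors: int
    variant_conversions: int
    alpha: float = 0.05  # significance level, agreed before the test starts

def decide(exp):
    """Return 'ship' only if the variant converts significantly better
    than the control; otherwise return 'inconclusive'."""
    p_control = exp.control_conversions / exp.control_visitors
    p_variant = exp.variant_conversions / exp.variant_visitors
    pooled = (exp.control_conversions + exp.variant_conversions) / (
        exp.control_visitors + exp.variant_visitors)
    se = sqrt(pooled * (1 - pooled)
              * (1 / exp.control_visitors + 1 / exp.variant_visitors))
    if se == 0:
        return "inconclusive"
    z = (p_variant - p_control) / se
    p_value = 1 - NormalDist().cdf(z)
    return "ship" if p_value < exp.alpha else "inconclusive"

# Illustrative experiments with invented counts.
clear_win = Experiment("Restructured guide lifts demo clicks", 2000, 60, 2000, 90)
small_lift = Experiment("New hero copy lifts demo clicks", 2000, 60, 2000, 64)
print(decide(clear_win), decide(small_lift))
```

Writing the rule down as code, or as an equally explicit statement in the experiment log, is what lets a buyer rerun the numbers and reach the same verdict.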

What to look for in answers

- Specificity: naming tools like GA4, BigQuery, Lighthouse, and Schema.org and describing how they tie together is good. Vague answers are bad.

- Access: an agency that can’t or won’t provide sanitized samples is unlikely to be transparent in a real engagement.

Practical red flags include promises of guaranteed rankings, opaque reporting that only shows top-line sessions, and an exclusive focus on vanity metrics instead of event-to-revenue mapping.

Pros and Cons: Metric-Driven Innovation Versus Vanity Reporting

Decision-makers must choose where to invest attention. Below is a structured pros and cons list that clarifies the operational trade-offs between metric-driven innovation and vanity reporting.

- Metric-Driven Innovation

  - Pros: links experiments to business outcomes, forces reproducible tests, reveals hidden bottlenecks in funnels.

  - Cons: requires investment in instrumentation, queries, and cross-functional access to product or engineering teams.

- Vanity Reporting

  - Pros: quick to produce and easy to communicate to non-technical stakeholders.

  - Cons: hides commercial impact, encourages activity without outcomes, and can mislead leadership into allocating budget to low-value work.

Common Misconception: More Pages Equals More Rankings

Many teams treat page count as a proxy for topical dominance. That is incorrect and often counterproductive.

Why it’s wrong: search engines reward consolidated authority around a topic, not a scattershot of low-quality pages. Publishing many shallow pages fragments internal link equity and makes it harder for your best page to rank. In practice we see a site with many low-value pages lose visibility for its primary topic because signals are distributed across dozens of thin pages.

What to do instead: build clusters of 3 to 6 deep assets per high-value topic, use clear canonicalization and internal linking to funnel authority, and measure cluster-level assisted conversions over a 3-6 month period to confirm impact.

Evaluation Checklist: What to Ask and Test in 7 Days

Use this checklist to evaluate any vendor or internal plan in a single week. It’s built for quick, practical verification.

- Request a sanitized GA4 BigQuery export sample for one revenue funnel. Can they show path reattribution queries?

- Ask for RUM data for the two highest-value landing pages. Do LCP, FID, and CLS meet the field thresholds of 2.5 seconds, 100 milliseconds, and 0.1?

- Get the experiment log: hypothesis, sample size, timeframe, and the exact decision rule used to declare success.

- Review schema usage: are commercial pages marked with schema:Product or schema:Service and properly structured per Schema.org structured data?

- Confirm they can point to Search Console evidence for index changes rather than screenshots alone.

If the vendor passes these tests, they are likely practicing measurable innovation rather than reporting activity.
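The schema item on the checklist can be spot-checked without special tooling. The sketch below pulls JSON-LD blocks out of a page and lists their top-level `@type` values. It assumes the markup is delivered as JSON-LD in the initial HTML, and the sample page is hypothetical. It does not replace validation with Google's Rich Results Test.

```python
import json
from html.parser import HTMLParser

class JsonLdParser(HTMLParser):
    """Collects the text of <script type="application/ld+json"> blocks."""
    def __init__(self):
        super().__init__()
        self._in_jsonld = False
        self.blocks = []

    def handle_starttag(self, tag, attrs):
        if tag == "script" and dict(attrs).get("type") == "application/ld+json":
            self._in_jsonld = True
            self.blocks.append("")

    def handle_data(self, data):
        if self._in_jsonld:
            self.blocks[-1] += data

    def handle_endtag(self, tag):
        if tag == "script":
            self._in_jsonld = False

def schema_types(html):
    """Return the set of top-level @type values found in JSON-LD blocks."""
    parser = JsonLdParser()
    parser.feed(html)
    types = set()
    for raw in parser.blocks:
        try:
            data = json.loads(raw)
        except json.JSONDecodeError:
            continue  # malformed block; a fuller audit would report it
        items = data if isinstance(data, list) else [data]
        for item in items:
            if not isinstance(item, dict):
                continue
            declared = item.get("@type")
            if isinstance(declared, list):
                types.update(declared)
            elif declared:
                types.add(declared)
    return types

# Illustrative commercial page.
page = (
    '<html><head><script type="application/ld+json">'
    '{"@context": "https://schema.org", "@type": "Service", "name": "SEO audit"}'
    "</script></head><body></body></html>"
)
found_types = schema_types(page)
print(found_types)
```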

Next Steps and Practical CTA

Single most useful takeaway: stop treating sessions and rankings as proof of innovation. Prioritize measurable experiments that tie content clusters and technical changes to real conversion events using GA4 event export and RUM data.

If you want a short vendor diagnostic, ask for a sanitized BigQuery export sample and a RUM report on two landing pages. We review those artifacts to produce a 7-point improvement plan.

Try this action: if you have a migration or redesign coming, ensure the plan includes cluster mapping, schema markup, and a Core Web Vitals remediation sprint. If you need help with a migration or a conversion-focused redesign, review case examples at our Website Redesign Company In Florida and consider a targeted landing sprint from our Landing Page Design Agency In Florida.

For tactical marketing stacks and messaging alignment that support measurement, our Brand Messaging Consulting Services In Arizona and SEO Agency In Florida pages explain how we operationalize these tests. For creative assets that move experiments faster, see our Graphic Design Agency In Arizona.

Frequently Asked Questions

What Is the Fastest Way to Link SEO Work to Revenue?

Short answer: export GA4 event-level data to BigQuery, build a path reattribution query that assigns weighted credit across sessions, and tie those credits to closed deals in your CRM for a multi-touch view of SEO influence.

To go deeper: this requires consistent event naming and common identifiers between site events and CRM records. The main trade-off is engineering time to maintain the export and the queries. The payoff is clearer investment decisions and fewer false-positive content wins.
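Once site events and CRM records share an identifier, the final join is small. The sketch below assumes you already have per-user credit shares by cluster (for example from a path reattribution query) and a CRM export of closed-won deals. The `user_id` key, cluster names, and amounts are hypothetical.

```python
from collections import defaultdict

def revenue_by_cluster(credits, deals):
    """credits: {user_id: {cluster: share}}, shares summing to 1 per user.
    deals: list of (user_id, closed_won_amount) rows from a CRM export.
    Returns attributed revenue per cluster."""
    revenue = defaultdict(float)
    for user_id, amount in deals:
        # Deals with no matching site events contribute nothing here.
        for cluster, share in credits.get(user_id, {}).items():
            revenue[cluster] += share * amount
    return dict(revenue)

# Illustrative inputs.
credits = {
    "u1": {"migration": 0.75, "pricing": 0.25},
    "u2": {"pricing": 1.0},
}
deals = [("u1", 20000.0), ("u2", 8000.0), ("u3", 5000.0)]  # u3 has no tracked events
attributed = revenue_by_cluster(credits, deals)
print(attributed)
```

Deals that cannot be matched to site events, like the third one here, are worth reporting separately, since a large unmatched share usually points to an identifier gap rather than a lack of SEO influence.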

How Long Should I Wait to Judge a Content Experiment?

Short answer: allow a minimum 3 to 6 month observation window for content experiments that target consideration and decision stages due to multi-session buyer journeys and delayed attribution.

To go deeper: short windows misattribute seasonal or promotional fluctuations to content changes. Use cohort analysis and maintain the same promotion channels during the test period to isolate the content effect. If your sales cycle is longer than three months, expect longer windows.

Are Lab Scores or Field Metrics More Important for SEO?

Short answer: field metrics win for prioritization because they show real user experience across devices and networks, while lab scores are useful for debugging but not for prioritizing fixes.

To go deeper: lab tools like Lighthouse help locate specific bottlenecks. But a lab improvement that doesn’t change RUM metrics probably won’t move conversions. Use lab tools to develop fixes, then validate them against RUM data after deployment.

What Questions Should I Ask Before Hiring an SEO Agency?

Short answer: ask for a sanitized GA4 BigQuery sample, examples of repeatable experiments with decision rules, and access to RUM data for two landing pages they claim to have improved.

To go deeper: a credible agency will show the event taxonomy, sample SQL queries, Search Console evidence, and experiment logs. If their reports are limited to sessions and rankings, they are likely focused on vanity metrics rather than metric-driven tests.

How Do I Reduce Crawl Waste Without Losing Index Coverage?

Short answer: audit URL parameter usage, enforce canonicalization, and apply robots rules selectively, while monitoring index coverage in Google Search Console to ensure important pages remain indexable.

To go deeper: excessive parameterized URLs inflate crawl volume and scatter signals. Use canonical tags and consistent internal linking. If you block via robots, confirm via Search Console that blocked pages are not important for discovery.
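A parameter audit can start from a crawl export. The sketch below groups URLs that collapse to the same address once content-neutral parameters are removed. The `IGNORED_PARAMS` set and the sample URLs are hypothetical, and which parameters are safe to ignore depends on your site.

```python
from collections import defaultdict
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Hypothetical list of parameters that do not change page content.
IGNORED_PARAMS = {"utm_source", "utm_medium", "utm_campaign", "ref", "sessionid"}

def normalize(url):
    """Drop ignored parameters and sort the rest so equivalent URLs match."""
    parts = urlsplit(url)
    kept = sorted((key, value) for key, value in parse_qsl(parts.query)
                  if key not in IGNORED_PARAMS)
    return urlunsplit((parts.scheme, parts.netloc, parts.path, urlencode(kept), ""))

def duplicate_groups(urls):
    """Group crawled URLs by normalized form; keep groups with several members."""
    groups = defaultdict(list)
    for url in urls:
        groups[normalize(url)].append(url)
    return {target: members for target, members in groups.items()
            if len(members) > 1}

# Illustrative crawl export.
crawled = [
    "https://example.com/services?utm_source=news",
    "https://example.com/services?ref=nav",
    "https://example.com/services",
    "https://example.com/blog?page=2",
]
dupes = duplicate_groups(crawled)
print(dupes)
```

Each group is a candidate for a single canonical target. Parameters that do change content, such as pagination, are deliberately left in place.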

Can Structured Data Improve Conversion or Just Rankings?

Short answer: structured data helps search engines present richer results which can increase qualified clicks, but its real value is improving result relevance and enabling enhanced SERP features that bring higher-intent visitors.

To go deeper: apply Schema.org types appropriate to your pages and validate them with Google’s Rich Results Test. Structured data alone won’t guarantee a feature but it increases eligibility, which can materially change click composition and conversion quality.