Digital Transformation in SEO & Digital Marketing: Key Metrics That Matter

Digital transformation is measurable when you couple technical telemetry with commercial outcomes: track Core Web Vitals and crawl/index signals, then map those to sales-accepted leads inside your CRM. By the end, you should be able to pick three operational KPIs to tie to revenue and set a repeatable 2-6 week measurement cadence for rebaselining performance.

You will be able to decide which metrics to prioritize for your business, set a practical cadence to measure progress, and interrogate vendors for the signals that reveal competence. This is not high-level theory — it’s a playbook framed around how SEOs and digital teams actually ship transformations and how they verify impact.

How to Measure Digital Transformation Progress

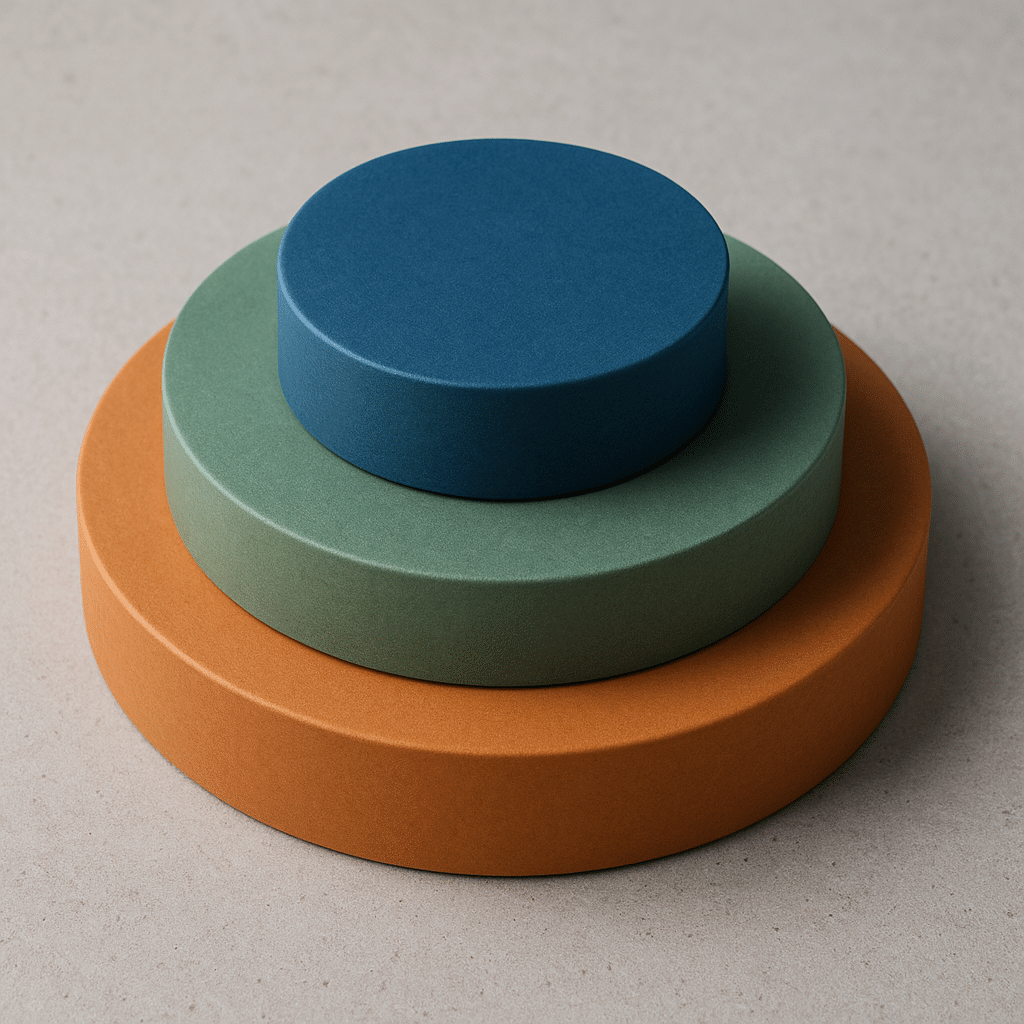

Most teams track traffic and call it transformation. That’s incomplete. What matters is whether platform changes improve discoverability, user experience, and commercial conversion — and whether those improvements persist after implementation. Measure three layers: discovery, experience, and commercial conversion. Each layer must be observable and attributed.

Short answer: Use discovery metrics from Google Search Console, experience metrics from Core Web Vitals and Lighthouse, and commercial metrics from CRM-source mapping to define progress. Rebaseline every 2-6 weeks during active migration or rollout.

Why this works: discovery shows whether search engines can find the content, experience determines whether users stay and interact, and commercial conversion tells you whether those interactions become value. In practice, when we isolate those three layers and assign a single data owner to each, rollout friction drops sharply. Good looks like stable or improving index coverage, LCP at or below 2.5 seconds at the 75th percentile of page loads (Google's "good" threshold), and a rising number of sales-accepted leads tied to organic channels. Bad looks like traffic volatility without matching CRM gains, or lab-metric improvements that produce no change in conversions.

KPIs That Actually Predict Revenue

Pick forward-looking KPIs that correlate with conversion, not vanity numbers. Here are the ones to prioritize and why they forecast revenue.

- Index Coverage Health from Google Search Console (now the Page indexing report). Why it matters: a shrinking count of indexed pages or a rising count of excluded pages means lost visibility for intent pages. Good vs bad: stable indexed counts for high-intent URLs are healthy; a persistent page-level ‘noindex’ on high-intent URLs is a red flag.

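- Core Web Vitals: Largest Contentful Paint (LCP) and Cumulative Layout Shift (CLS). Why it matters: these affect bounce rates on revenue pages. Thresholds to watch: Google classes LCP at or below 2.5 seconds and CLS at or below 0.1, measured at the 75th percentile of real page loads, as "good"; use those as operational targets. Track the third Core Web Vital, Interaction to Next Paint (INP, good at or below 200 ms), alongside them.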

- Organic Sales-Accepted Leads (SALs) mapped via UTM to CRM. Why it matters: raw form submissions overstate value. What we usually see is that reclassifying leads as SALs changes how SEO work is prioritized.

- Click-to-Contact Time on landing pages. Why it matters: friction between landing and contact often kills conversions; measure seconds to first actionable element and aim to reduce it.

Tools that make this measurable: Google Analytics 4 for event-driven metrics, Google Search Console for index signals, Screaming Frog for crawls, and Lighthouse for lab UX checks. Tie GA4 events into your CRM using UTM parameters and, where possible, server-side ingestion so you can answer which organic pages produced closed-won business.
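The landing-page-to-SAL join described above can be sketched in a few lines. This is a minimal sketch, assuming your CRM export carries the session's landing page, a channel/medium value captured at submission, and a lead status; the `Lead` fields and the `"SAL"` status value are hypothetical and should be renamed to match your CRM.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass
class Lead:
    # Hypothetical CRM export fields; rename to match your CRM schema.
    landing_page: str  # first page of the session that produced the lead
    medium: str        # captured at submission, e.g. "organic", "cpc", "email"
    status: str        # e.g. "new", "SAL", "disqualified"


def organic_sals_by_page(leads):
    """Return (page, form_fills, sals, sal_rate) for organic leads, most SALs first."""
    fills = Counter()
    sals = Counter()
    for lead in leads:
        if lead.medium != "organic":
            continue  # paid, email, etc. are reported elsewhere
        fills[lead.landing_page] += 1
        if lead.status == "SAL":
            sals[lead.landing_page] += 1
    rows = [(page, fills[page], sals[page], sals[page] / fills[page]) for page in fills]
    return sorted(rows, key=lambda row: (-row[2], row[0]))


leads = [
    Lead("/products/valve-a", "organic", "SAL"),
    Lead("/products/valve-a", "organic", "new"),
    Lead("/blog/what-is-a-valve", "organic", "new"),
    Lead("/blog/what-is-a-valve", "organic", "disqualified"),
    Lead("/products/valve-a", "cpc", "SAL"),
]
for row in organic_sals_by_page(leads):
    print(row)
```

In this made-up sample, both pages produce the same number of organic form fills, but only the product page produces a SAL, which is exactly the signal that should reorder SEO priorities.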

Technical Metrics to Watch During Migration

Migrations and platform changes are where digital transformation most often breaks. The technical checks below are the ones that stop common failure modes.

Why they matter and how to measure them:

- Canonicalization and Hreflang Signals. How it fails: mismatched canonical tags or incorrect hreflang pairs fragment indexation. Consequence: duplicated content and diluted rankings for revenue pages.

- Redirect Chains and Response Codes. How it fails: long redirect chains slow page loads, waste crawl requests, and can lose link equity. Good looks like single-hop 301 redirects for permanently moved URLs (a 302 signals a temporary move) and no 5xx spikes after deploy.

- Sitemap Accuracy and Lastmod Alignment. How it fails: sitemaps that list blocked or noindexed pages confuse crawlers, and lastmod dates that don't reflect real content changes stop being a useful recrawl hint. Use automated validation to ensure sitemap entries match live canonical URLs.

- Crawl Budget Signals. How it fails: leaving thousands of parameterized or printer-friendly pages crawlable spends crawl activity on low-value URLs. Fix by consolidating low-value content and using robots directives and canonicalization. We often reclassify up to 20 percent of low-value URLs during a migration to free crawl budget for commercial pages.
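The redirect-chain check above can be automated before deploy. A minimal sketch, assuming your redirect rules are exported as a plain `{source: target}` mapping of paths (status codes omitted); `resolve_redirects` and `flatten` are hypothetical helper names. It reports every multi-hop chain, fails on loops, and rewrites each rule to point straight at its final destination.

```python
def resolve_redirects(redirects, max_hops=10):
    """Map each source to (final_url, hops). Raises ValueError on loops or overlong chains."""
    resolved = {}
    for source in redirects:
        seen = {source}
        current = source
        hops = 0
        while current in redirects:
            current = redirects[current]
            hops += 1
            if current in seen or hops > max_hops:
                raise ValueError(f"redirect loop or overlong chain starting at {source}")
            seen.add(current)
        resolved[source] = (current, hops)
    return resolved


def flatten(redirects):
    """Rewrite every rule so it points directly at its final destination (one hop)."""
    return {source: final for source, (final, _) in resolve_redirects(redirects).items()}


rules = {
    "/old-valve": "/valves/old-valve",
    "/valves/old-valve": "/products/valve-a",
    "/catalog.html": "/products",
}
# Any source needing more than one hop is a chain to fix before go-live.
chains = {src: res for src, res in resolve_redirects(rules).items() if res[1] > 1}
print(chains)
print(flatten(rules))
```

Flattening in the redirect map, rather than relying on the server to follow chains, keeps every legacy URL one hop from its destination.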

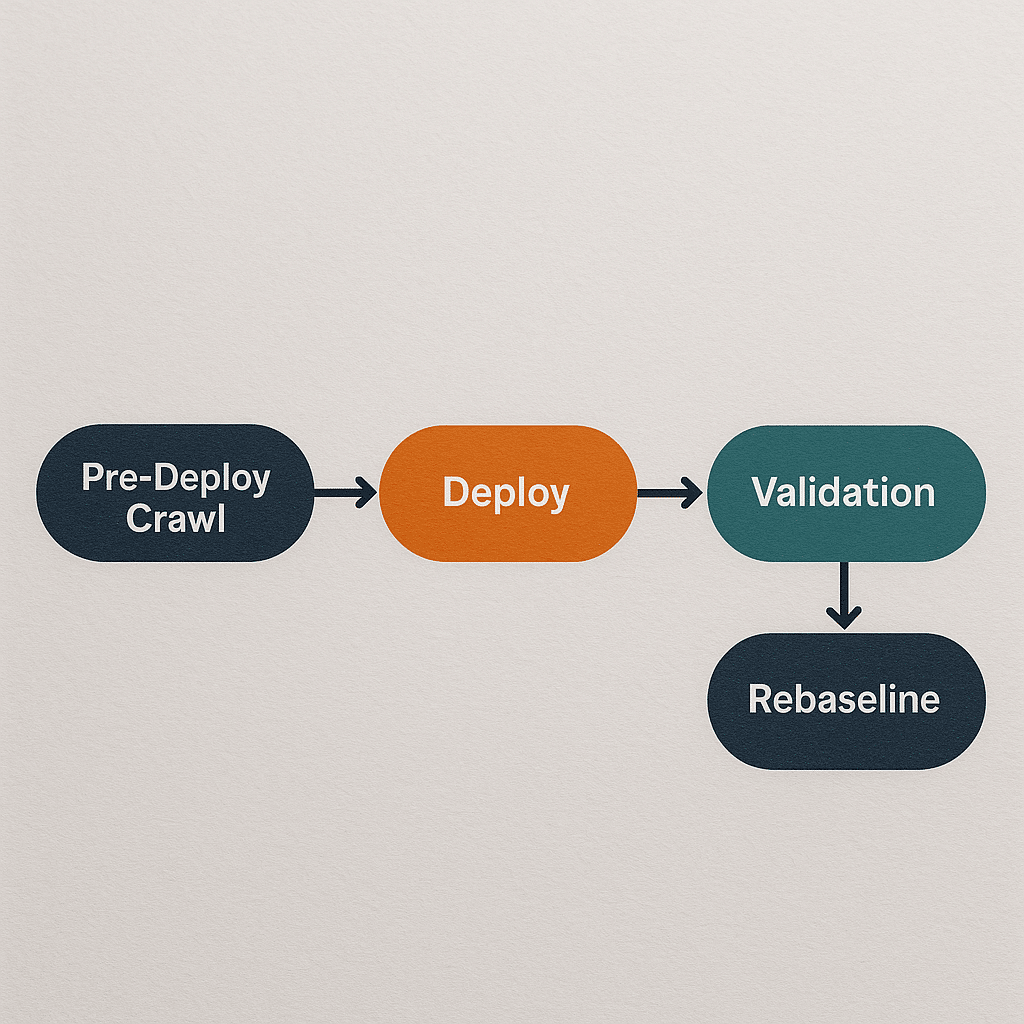

Operational thresholds we adopt: run a pre-deploy crawl and a post-deploy crawl, then compare unique canonical URL counts and indexable status. If the indexable URL count changes by more than a small, pre-agreed percentage, stop and investigate. A typical rebaseline cadence after a migration is 2-6 weeks, which is usually the window in which search engines settle redirects and index signals for most sites.
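The pre/post crawl comparison above reduces to a set comparison plus a stop rule. A minimal sketch, assuming both crawls are exported as CSV with hypothetical `url`, `indexable`, and `canonical` columns (map these to your crawler's actual export headers); the 2 percent tolerance is illustrative, not a recommendation.

```python
import csv
import io


def indexable_canonicals(rows):
    """Unique canonical URLs among rows marked indexable.

    Assumes hypothetical columns 'url', 'indexable' ("yes"/"no") and 'canonical';
    an empty canonical falls back to the row's own URL.
    """
    return {row["canonical"] or row["url"] for row in rows if row["indexable"] == "yes"}


def compare_crawls(pre_rows, post_rows, tolerance_pct):
    pre = indexable_canonicals(pre_rows)
    post = indexable_canonicals(post_rows)
    if not pre:
        raise ValueError("pre-deploy crawl has no indexable URLs")
    change_pct = (len(post) - len(pre)) / len(pre) * 100
    return {
        "pre": len(pre),
        "post": len(post),
        "change_pct": round(change_pct, 1),
        # Only meaningful for URLs that kept their address through the migration.
        "lost": sorted(pre - post),
        "stop": abs(change_pct) > tolerance_pct,
    }


PRE = """url,indexable,canonical
/products/valve-a,yes,/products/valve-a
/products/valve-b,yes,/products/valve-b
/products/valve-b?sort=price,no,/products/valve-b
/about,yes,
/print/valve-a,no,/products/valve-a
"""
POST = """url,indexable,canonical
/products/valve-a,yes,/products/valve-a
/products/valve-b,no,/products/valve-b
/about,yes,/about
"""

result = compare_crawls(
    list(csv.DictReader(io.StringIO(PRE))),
    list(csv.DictReader(io.StringIO(POST))),
    tolerance_pct=2,  # illustrative only; agree the real tolerance up front
)
print(result)
```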

Organizational Signals That Break Transformation

Technology can be perfect, but organizations still fail. What usually happens is that teams ship features without owning the metrics. Here are the organizational failure modes and why they break outcomes.

- Siloed Ownership. Cause and effect: marketing says it’s the dev team’s problem when Core Web Vitals are poor, devs call it a design issue, and nobody fixes conversion friction. Consequence: incremental improvements stall.

- No Sales Mapping. Cause and effect: marketing reports lead counts while sales ignores them. Consequence: investment flows to activities that increase form fills but not sales-accepted leads.

- Vendor Misalignment. Cause and effect: using multiple vendors with overlapping responsibility for tracking and tagging causes event duplication and attribution drift. What good looks like: one owner for analytics and one for tagging governance, working from a documented event taxonomy.

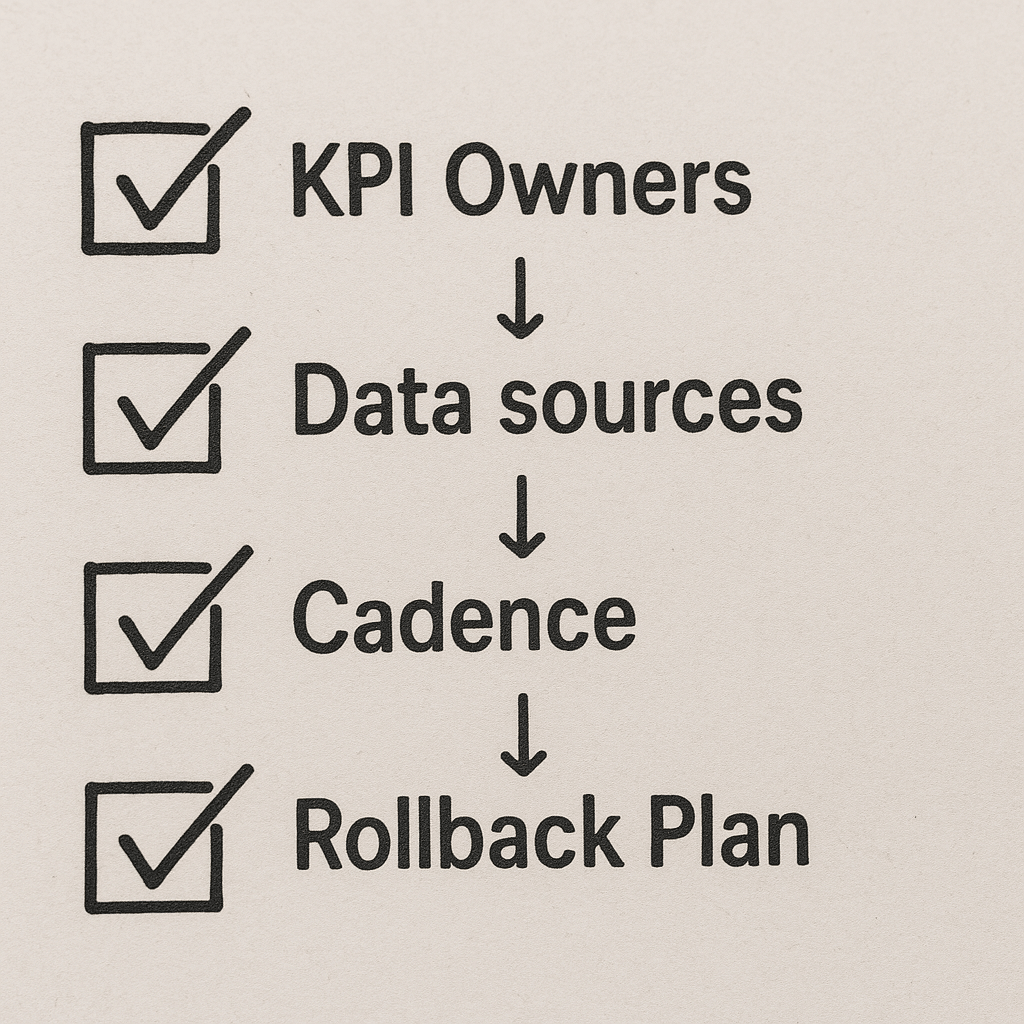

What we usually see when transformation succeeds is an agreed measurement charter — a short document naming the KPI owners, the canonical sources of truth, and the rebaseline cadence. That simple artifact prevents finger-pointing after a migration fails.
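The measurement charter is a document rather than code, but keeping its core as structured data makes it easy to sanity-check. A minimal sketch with assumed field names and validation rules: it flags a layer with no KPI, a KPI with no owner, a KPI listed twice (a common way two owners creep in), or a cadence outside the 2-6 week window.

```python
from dataclasses import dataclass

LAYERS = ("discovery", "experience", "commercial")


@dataclass(frozen=True)
class KpiEntry:
    kpi: str
    layer: str            # one of LAYERS
    owner: str            # exactly one named person or role
    source_of_truth: str  # e.g. "Google Search Console", "CrUX", "CRM"


@dataclass(frozen=True)
class MeasurementCharter:
    entries: tuple
    rebaseline_weeks: int

    def problems(self):
        """Return human-readable gaps in the charter (empty list means it passes)."""
        issues = []
        covered = {entry.layer for entry in self.entries}
        for layer in LAYERS:
            if layer not in covered:
                issues.append(f"no KPI covers the {layer} layer")
        seen = set()
        for entry in self.entries:
            if not entry.owner.strip():
                issues.append(f"{entry.kpi} has no owner")
            if entry.kpi in seen:
                issues.append(f"{entry.kpi} is listed more than once")
            seen.add(entry.kpi)
        if not 2 <= self.rebaseline_weeks <= 6:
            issues.append("rebaseline cadence is outside the 2-6 week window")
        return issues


charter = MeasurementCharter(
    entries=(
        KpiEntry("Index coverage (indexed pages)", "discovery", "SEO lead", "Google Search Console"),
        KpiEntry("LCP p75 on revenue pages", "experience", "", "CrUX"),
        KpiEntry("Organic SALs", "commercial", "Sales ops", "CRM"),
    ),
    rebaseline_weeks=8,
)
for issue in charter.problems():
    print(issue)
```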

Real-World Scenario: 30-Page E-Commerce Migration with Constraints

Scenario: a small manufacturer in Phoenix is migrating a 30-page proprietary product catalog with fewer than 40 developer hours available and a hard deadline: the site must be live for a trade show in six weeks. Budget is constrained, and the team is one marketer and two developers.

Why this scenario matters: small catalogs are common and they break when migrations are rushed. Here’s an actionable plan and what to watch for.

- Pick a Minimal Viable Migration: focus on revenue pages only. That means preserving canonical URLs for the 12 highest-traffic product pages and postponing lower-priority pages to a second sprint. Effect: reduces migration scope and risk.

- Preflight Crawl: run Screaming Frog to capture current canonical, meta, and index status. Export a delta sheet the developers can use to map redirects; this is how you avoid redirect chain failures, and it saves dev hours.

- Server-Side Tagging For Analytics: implement a lightweight server-side GTM container to preserve UTM fidelity and avoid client-side tracking breaks during the migration. This protects your organic SAL attribution on day one.

- Two-Week Rebaseline: after go-live, measure index coverage, LCP, and SALs at two-week intervals for up to 8 weeks. If index coverage drops for revenue pages, roll back the migration for those specific URLs until remediated.
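The two-week rebaseline in this plan can be scheduled and checked mechanically. A minimal sketch, assuming you can export per-URL indexed status at each check-in (for example from Search Console's page indexing report or URL Inspection); the helper names, input shapes, and the go-live date are hypothetical.

```python
from datetime import date, timedelta


def checkin_dates(go_live, interval_weeks=2, horizon_weeks=8):
    """Rebaseline dates at fixed intervals after go-live, up to the horizon."""
    return [
        go_live + timedelta(weeks=week)
        for week in range(interval_weeks, horizon_weeks + 1, interval_weeks)
    ]


def rollback_candidates(revenue_urls, baseline_indexed, current_indexed):
    """Revenue URLs that were indexed at baseline but are not indexed now."""
    return sorted(
        url for url in revenue_urls
        if url in baseline_indexed and url not in current_indexed
    )


go_live = date(2024, 3, 4)  # illustrative go-live date
print(checkin_dates(go_live))

revenue = ["/products/valve-a", "/products/valve-b", "/products/valve-c"]
baseline = {"/products/valve-a", "/products/valve-b", "/products/valve-c"}
week_two = {"/products/valve-a", "/products/valve-c"}
print(rollback_candidates(revenue, baseline, week_two))
```

Returning specific URLs rather than a site-wide pass/fail matches the plan's intent of rolling back only the affected pages.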

Failure mode and consequence for this scenario: skipping the preflight crawl or mapping redirects incorrectly creates redirect chains and canonical mismatches. In practice, that means immediate visibility loss for the migrated pages and a measurable drop in sales inquiries during the trade show, a high-cost operational failure given the deadline.

Checklist to Evaluate Vendor Quality

When hiring an agency or contractor for digital transformation, ask questions that reveal operational competence, not marketing-speak. Here’s a checklist you can use to evaluate quality quickly.

- Can you see their pre-deploy and post-deploy crawl reports? Ask for an example redline that shows canonical, redirect, and index status comparison. Good answer: they provide a crawl delta and explain remediation items.

- What is your tracking governance? Ask whether they use server-side tagging and event taxonomies, and how they handle UTM-to-CRM mapping. Good answer: they name Google Analytics 4, explain event schemas, and show a CRM mapping table.

- How do you validate Core Web Vitals across devices? Look for mention of field data (CrUX, Search Console) plus lab checks (Lighthouse). Bad answer: only lab reports with no field coverage.

- What is your rollback plan for search regressions? A competent vendor will have a rollback checklist and automated monitoring alerts tied to index coverage or landing page impressions.

- Can they show one case where their taxonomy change outperformed a backlink campaign? Vendors who claim success should show the before/after KPI mapping to revenue or SALs rather than just traffic graphs.

Red flags: no post-deploy monitoring, no CRM mapping, or refusal to hand over crawl exports. If they balk, assume you will be left holding the problem when search visibility drops.

Practical Evaluation Checklist and Next Steps

Use this short checklist to evaluate current transformation progress or to vet vendors. Each item is actionable and repeatable.

- Confirm canonical and redirect delta between pre-deploy and post-deploy crawls.

- Verify Core Web Vitals for top 10 revenue pages using field data (CrUX) and Lighthouse lab runs.

- Map top organic landing pages to sales-accepted leads in your CRM and confirm event-level attribution via server-side tagging.

- Ensure sitemap and robots.txt reflect only indexable content for commercial pages.

- Require a rollback trigger: if organic impressions for revenue pages fall by more than a pre-agreed margin within two weeks, the vendor rolls back the changes for those pages.
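The sitemap item in this checklist can be validated automatically against your crawl. A minimal sketch, assuming you already have the set of indexable canonical URLs (for example from the post-deploy crawl); it parses `<loc>` entries using the standard sitemaps.org namespace, and the helper names and sample URLs are hypothetical.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}


def sitemap_locs(xml_text):
    """Set of <loc> URLs listed in a standard urlset sitemap."""
    root = ET.fromstring(xml_text)
    return {loc.text.strip() for loc in root.findall("sm:url/sm:loc", SITEMAP_NS)}


def audit_sitemap(xml_text, indexable_canonicals):
    """Compare sitemap entries with the crawl's indexable canonical URLs."""
    locs = sitemap_locs(xml_text)
    return {
        # Listed but not indexable: remove from the sitemap or fix the page.
        "not_indexable": sorted(locs - indexable_canonicals),
        # Indexable commercial pages the sitemap forgot to list.
        "missing_from_sitemap": sorted(indexable_canonicals - locs),
    }


SITEMAP = """<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">
  <url><loc>https://example.com/products/valve-a</loc><lastmod>2024-03-01</lastmod></url>
  <url><loc>https://example.com/print/valve-a</loc></url>
</urlset>"""
indexable = {"https://example.com/products/valve-a", "https://example.com/products/valve-b"}
print(audit_sitemap(SITEMAP, indexable))
```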

Next step recommendation: run a two-week smoke test where you pick three revenue pages, implement the vendor’s changes in a staging environment, and compare discovery, experience, and SALs before and after. That small experiment exposes both technical competence and measurement discipline quickly.

Need help implementing a test or choosing which metrics to tie to sales? DIQSEO’s cross-disciplinary teams can run the preflight crawl and set up CRM mapping for small to medium businesses. For example, we often pair a site migration with targeted landing page design work or a refreshed brand messaging audit from our brand messaging consulting practice to protect conversion velocity. If you’re running local campaigns, consider complementing SEO with targeted Google Ads (formerly AdWords) management or paid social advertising to stabilize leads while search reindexes.

For visual asset work tied to better conversion, our graphic design and website redesign services can be scoped to the 2-6 week window so you don’t miss trade show or promotional deadlines. If you want fast, short-format creative to support traffic during transformation, our short form video services are a lightweight parallel channel.

Frequently Asked Questions

What Is the Fastest Way to Detect a Search Regression After a Deploy?

Short answer: Run an immediate post-deploy crawl, compare canonical and indexable URL counts to the pre-deploy crawl, and watch Search Console impressions for your top revenue pages daily for two weeks.

To go deeper, use Screaming Frog to create a canonical mapping export pre-deploy and post-deploy, then filter where canonical URLs changed or where redirect chains appeared. Combine that with daily Search Console reports on impressions and clicks for the affected pages. If impressions drop and canonical mapping changed, you’ve found your regression vector and should roll back that change.
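The regression check described above combines two signals: a changed canonical and an impressions drop. A minimal sketch, assuming canonical mappings from both crawls as `{url: canonical}` dictionaries and per-page impressions summed from Search Console performance exports before and after the deploy; the helper names and the 30 percent drop threshold are hypothetical.

```python
def canonical_changes(pre, post):
    """URLs whose canonical changed or disappeared between crawls: {url: (before, after)}."""
    changed = {}
    for url, before in pre.items():
        after = post.get(url)  # None means the URL vanished from the post-deploy crawl
        if after != before:
            changed[url] = (before, after)
    return changed


def flag_regressions(changed, impressions_before, impressions_after, drop_pct):
    """Changed URLs whose impressions fell by more than drop_pct percent."""
    flagged = []
    for url in changed:
        before = impressions_before.get(url, 0)
        after = impressions_after.get(url, 0)
        if before and (before - after) / before * 100 > drop_pct:
            flagged.append(url)
    return sorted(flagged)


pre = {"/products/valve-a": "/products/valve-a", "/products/valve-b": "/products/valve-b"}
post = {"/products/valve-a": "/products/valve-a", "/products/valve-b": "/products"}
changed = canonical_changes(pre, post)

impressions_before = {"/products/valve-a": 900, "/products/valve-b": 400}
impressions_after = {"/products/valve-a": 880, "/products/valve-b": 120}
print(flag_regressions(changed, impressions_before, impressions_after, drop_pct=30))
```

Requiring both signals keeps normal day-to-day impression noise from triggering a rollback on its own.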

How Should I Tie SEO Work to Sales Metrics?

Short answer: Map organic landing pages to CRM lead records using consistent UTM parameters and server-side ingestion so you can report sales-accepted leads by landing page and campaign.

To go deeper, create an event taxonomy so every conversion action (form submit, phone click, chat start) emits the same UTM payload and page identifier. In practice, this reveals which pages produce sales-ready conversations and which only produce low-value form fills. Prioritize work on pages that produce sales-accepted leads.
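One way to enforce that shared payload is to build every conversion event through a single function. A minimal sketch, assuming the landing URL (with any UTM query string) is stored at session start and passed in at conversion time; the action names, `page_id` values, and the `"(none)"` placeholder are a hypothetical taxonomy, not GA4-defined names.

```python
from urllib.parse import parse_qs, urlparse

# Hypothetical taxonomy: every conversion action must be one of these.
CONVERSION_ACTIONS = {"form_submit", "phone_click", "chat_start"}
UTM_KEYS = ("utm_source", "utm_medium", "utm_campaign")


def conversion_event(action, landing_url, page_id):
    """Build one payload shape for every conversion action."""
    if action not in CONVERSION_ACTIONS:
        raise ValueError(f"unknown conversion action: {action}")
    query = parse_qs(urlparse(landing_url).query)
    # Missing UTM keys get an explicit placeholder instead of being dropped.
    utm = {key: query.get(key, ["(none)"])[0] for key in UTM_KEYS}
    return {"action": action, "page_id": page_id, **utm}


event = conversion_event(
    "phone_click",
    "https://example.com/products/valve-a?utm_source=newsletter&utm_medium=email",
    "pdp-valve-a",
)
print(event)
```

Rejecting unknown action names at the source is what prevents the event duplication and attribution drift described in the vendor section.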

Which Tools Are Non-Negotiable for a Migration?

Short answer: A crawler (Screaming Frog), Google Search Console, a field performance source for Core Web Vitals (CrUX via Search Console), and a CRM-integrated analytics pipeline (GA4 with server-side tagging) are the baseline tools.

To go deeper, you also want Lighthouse for lab tests, a server log analyzer if you operate at scale, and a redirect mapping spreadsheet exported from the crawler. Vendors who can’t produce pre/post crawl outputs and a CRM mapping table are risky hires.

How Long Before I See Organic Gains After Reorganizing Internal Linking?

Short answer: Expect search engines to re-evaluate internal link signals over a 2-8 week window; improvements on prioritized pages often appear within that timeframe if index coverage is stable.

To go deeper, concentrate link equity by reducing click depth to two clicks from the homepage for revenue pages and adding contextual hub pages. In practice, internal linking changes combined with a clean sitemap can outpace low-quality backlink campaigns for certain queries.
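Click depth is a breadth-first search over the internal link graph. A minimal sketch, assuming you export internal links as a `{page: [linked pages]}` mapping from your crawler; the helper names and sample URLs are hypothetical. It reports revenue pages deeper than two clicks (unreachable pages count as infinitely deep) and shows how one hub link changes the result.

```python
from collections import deque


def click_depths(links, home="/"):
    """Breadth-first search from the homepage: {url: clicks needed to reach it}."""
    depth = {home: 0}
    queue = deque([home])
    while queue:
        page = queue.popleft()
        for target in links.get(page, ()):
            if target not in depth:
                depth[target] = depth[page] + 1
                queue.append(target)
    return depth


def too_deep(links, revenue_pages, max_clicks=2, home="/"):
    """Revenue pages deeper than max_clicks; unreachable pages get infinite depth."""
    depth = click_depths(links, home)
    result = {}
    for page in revenue_pages:
        d = depth.get(page, float("inf"))
        if d > max_clicks:
            result[page] = d
    return result


links = {
    "/": ["/products", "/blog"],
    "/products": ["/products/valves"],
    "/products/valves": ["/products/valve-a"],
    "/blog": ["/blog/valve-guide"],
    "/blog/valve-guide": ["/products/valve-b"],
}
revenue = ["/products/valve-a", "/products/valve-b", "/products/valve-c"]
print(too_deep(links, revenue))

# Adding a direct link from the /products hub pulls valve-a up to two clicks.
with_hub = dict(links, **{"/products": ["/products/valves", "/products/valve-a"]})
print(too_deep(with_hub, revenue))
```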

Are Core Web Vitals Still Worth Prioritizing?

Short answer: Yes — they represent real user experience signals and can materially affect bounce and engagement on revenue pages, so treat them as part of your transformation telemetry.

To go deeper, monitor LCP, CLS, and INP using field data where possible and use Lighthouse to iterate fixes. Prioritize fixes on high-conversion pages first. If you only run lab tests, you miss real-user variance that matters for commercial outcomes.
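Field data is judged at the 75th percentile against Google's published boundaries (good at or below 2.5 s LCP, 200 ms INP, 0.1 CLS; poor above 4 s, 500 ms, 0.25). A minimal sketch, assuming you collect per-page-load samples through your own real-user monitoring; CrUX and Search Console already report these aggregates, so this only matters for self-collected data. The nearest-rank percentile used here is a simplification and may differ slightly from how CrUX computes percentiles; the sample values are made up.

```python
import math

# (good upper bound, needs-improvement upper bound); above the second value is "poor".
THRESHOLDS = {
    "LCP": (2500, 4000),  # milliseconds
    "INP": (200, 500),    # milliseconds
    "CLS": (0.1, 0.25),   # unitless layout-shift score
}


def p75(samples):
    """75th percentile by the nearest-rank method."""
    ordered = sorted(samples)
    rank = math.ceil(0.75 * len(ordered))
    return ordered[rank - 1]


def classify(metric, samples):
    """Return (p75 value, rating) for one metric on one page."""
    good, poor = THRESHOLDS[metric]
    value = p75(samples)
    if value <= good:
        return value, "good"
    if value <= poor:
        return value, "needs improvement"
    return value, "poor"


lcp_ms = [1800, 2100, 2300, 2600, 3900, 2200, 2450, 5200]
cls = [0.02, 0.05, 0.0, 0.08, 0.12, 0.03, 0.01, 0.3]
print(classify("LCP", lcp_ms))
print(classify("CLS", cls))
```

The median LCP in this sample is comfortably under 2.5 s, yet the page still misses "good" at the 75th percentile, which is the real-user variance that lab-only testing hides.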

What Are the Most Dangerous Red Flags from an Agency Pitch?

Short answer: Promises to “double traffic” without showing CRM mappings, refusal to hand over crawl exports, and no rollback plan are the clearest red flags.

To go deeper, ask for a candid case study showing technical remediation, the exact KPIs they changed, and how those KPIs mapped to commercial outcomes. Real vendors will show before/after crawl diffs and a measurement charter; those who avoid this are hiding process risk.

Authoritative References

For Core Web Vitals guidance, consult the official documentation on Web Vitals. For indexation and Search Console signals, see Google Search Central. For accessibility and content structure best practices, refer to W3C WAI standards.

Conclusion: The Single Most Useful Takeaway

Don’t treat digital transformation as a set of disconnected tasks. Pick three measurable KPIs across discovery, experience, and commercial conversion; assign owners; and enforce a 2-6 week rebaseline cadence during active change. That small discipline separates successful transformations from costly regressions.

If you want a practical next step, run a 2-week smoke test on three revenue pages with pre/post crawl, Core Web Vitals monitoring, and CRM mapping. Within those two weeks, the experiment tells you whether a vendor or change is safe to scale.